Android's camera hardware abstraction layer (HAL) connects the higher-level camera framework APIs in android.hardware.camera2 to the underlying camera driver and hardware. Android 8.0 introduced Treble, switching the CameraHal API to a stable interface defined by the HAL interface description language (HIDL). If you previously developed a camera HAL module and driver for Android 7.0 or lower, be aware of significant changes in the camera pipeline.

Camera HAL3 features

The aim of the Android Camera API redesign is to substantially increase the ability of apps to control the camera subsystem on Android devices, while reorganizing the API to make it more efficient and maintainable. The additional control makes it easier to build high-quality camera apps that operate reliably across multiple products, while still using device-specific algorithms whenever possible to maximize quality and performance.

Version 3 of the camera subsystem structures the operating modes into a single unified view, which can be used to implement any of the previous modes and several others, such as burst mode. This results in better user control over focus and exposure, and more post-processing, such as noise reduction, contrast, and sharpening. This simplified view also makes it easier for app developers to use the camera's various functions.

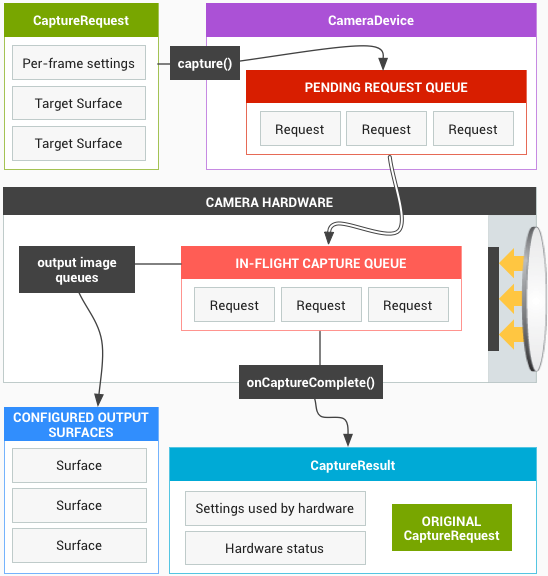

The API models the camera subsystem as a pipeline that converts incoming requests for frame captures into frames, on a 1:1 basis. The requests encapsulate all configuration information about the capture and processing of a frame. This includes resolution and pixel format; manual sensor, lens, and flash control; 3A operating modes; RAW->YUV processing control; statistics generation; and so on.

In simple terms, the application framework requests a frame from the camera subsystem, and the camera subsystem returns results to an output stream. In addition, metadata containing information such as color spaces and lens shading is generated for each set of results. You can think of camera version 3 as a pipeline, where camera version 1 was a one-way stream. It converts each capture request into one image captured by the sensor, which is processed into:

- A Result object with metadata about the capture.

- One to N buffers of image data, each going into its own destination Surface.
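The 1:1 mapping described above can be illustrated with a small toy model. This is only a sketch, not the Android API: the names `CaptureRequest`, `CaptureResult`, and `process`, the `exposure_ns` setting, and the 4-byte placeholder buffers are all hypothetical and exist purely to show that one request yields one result object plus one buffer per target surface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptureRequest:
    """Hypothetical stand-in for a capture request: settings plus targets."""
    frame_id: int
    settings: dict
    targets: tuple  # names of the output surfaces for this request


@dataclass
class CaptureResult:
    """Hypothetical stand-in for the result of one capture."""
    frame_id: int
    metadata: dict
    buffers: dict  # surface name -> image buffer (placeholder bytes here)


def process(request):
    """Turn one request into exactly one result with one buffer per target."""
    if not request.targets:
        raise ValueError("a request needs at least one target surface")
    # The metadata echoes the settings that were applied to this frame.
    metadata = dict(request.settings)
    metadata["frame_id"] = request.frame_id
    # One placeholder buffer per target surface (1..N buffers per request).
    buffers = {name: bytes(4) for name in request.targets}
    return CaptureResult(request.frame_id, metadata, buffers)


requests = [
    CaptureRequest(i, {"exposure_ns": 10_000_000}, ("preview", "jpeg"))
    for i in range(3)
]
results = [process(r) for r in requests]
```

In this model every entry in `requests` has exactly one counterpart in `results`, and each result carries as many buffers as the request named targets.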

The set of possible output Surfaces is preconfigured:

- Each Surface is a destination for a stream of image buffers of a fixed resolution.

- Only a small number of Surfaces can be configured as outputs at once (~3).

A request contains all desired capture settings and the list of output Surfaces into which image buffers are pushed for that request (chosen from the total configured set). A request can be one-shot (with capture()), or it can be repeated indefinitely (with setRepeatingRequest()). Captures have priority over repeating requests.
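The scheduling rule above (one-shot captures take priority over the repeating request, and targets must come from the configured set) can be sketched as a toy model. This is not the Android implementation: `ToySession`, its methods, and the `MAX_OUTPUTS` constant are hypothetical names, and the limit of 3 is simply taken from the "~3" figure in the text.

```python
from collections import deque

MAX_OUTPUTS = 3  # assumption: mirrors the "~3" surfaces mentioned in the text


class ToySession:
    """Hypothetical model of a configured capture session."""

    def __init__(self, surfaces):
        if len(surfaces) > MAX_OUTPUTS:
            raise ValueError("too many output surfaces")
        self.surfaces = set(surfaces)
        self.pending = deque()  # one-shot requests, served in FIFO order
        self.repeating = None   # at most one repeating request

    def _check(self, targets):
        # A request may only target surfaces from the configured set.
        unknown = set(targets) - self.surfaces
        if unknown:
            raise ValueError(f"unconfigured surfaces: {sorted(unknown)}")

    def capture(self, name, targets):
        """Queue a one-shot request."""
        self._check(targets)
        self.pending.append((name, tuple(targets)))

    def set_repeating_request(self, name, targets):
        """Replace the repeating request."""
        self._check(targets)
        self.repeating = (name, tuple(targets))

    def next_request(self):
        """One-shot captures win; otherwise fall back to the repeating one."""
        if self.pending:
            return self.pending.popleft()
        return self.repeating


session = ToySession(["preview", "jpeg"])
session.set_repeating_request("preview-loop", ["preview"])
session.capture("still", ["preview", "jpeg"])
order = [session.next_request()[0] for _ in range(3)]
```

Here the queued one-shot request is served first, after which the session falls back to the repeating request for every subsequent frame.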

Figure 1. Camera core operation model

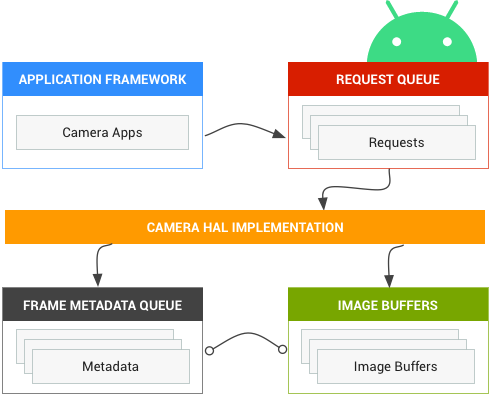

Camera HAL1 overview

Version 1 of the camera subsystem was designed as a black box with high-level controls and the following three operating modes:

- Preview

- Video record

- Still capture

Each mode has slightly different and overlapping capabilities. This made it hard to implement new features such as burst mode, which falls between two of the operating modes.

Figure 2. Camera components

Android 7.0 continues to support camera HAL1 because many devices still rely on it. In addition, the Android camera service supports implementing both HALs (1 and 3), which is useful when you want to support a less-capable front-facing camera with camera HAL1 and a more advanced back-facing camera with camera HAL3.

There is a single camera HAL module (with its own version number), which lists multiple independent camera devices, each with its own version number. Camera module 2 or newer is required to support devices 2 or newer, and such camera modules can contain a mix of camera device versions (this is what we mean when we say Android supports implementing both HALs).
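The versioning rule above can be expressed as a small check. This is only an illustrative sketch: `CameraModule`, `CameraDevice`, and `unsupported_devices` are hypothetical names rather than HAL types, and versions are reduced to their major number for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraDevice:
    """Hypothetical record for one camera device listed by the module."""
    camera_id: str
    device_version: int  # major version only, for example 1 or 3


@dataclass(frozen=True)
class CameraModule:
    """Hypothetical record for the single camera HAL module."""
    module_version: int  # major version only
    devices: tuple


def unsupported_devices(module):
    """Return ids of devices that need a newer module than the one given.

    Rule from the text: devices of version 2 or newer require a module of
    version 2 or newer.
    """
    return [
        d.camera_id
        for d in module.devices
        if d.device_version >= 2 and module.module_version < 2
    ]


# A version 2 module can mix a HAL1 front camera with a HAL3 back camera.
mixed = CameraModule(2, (CameraDevice("front", 1), CameraDevice("back", 3)))
# A version 1 module cannot host a HAL3 device.
legacy = CameraModule(1, (CameraDevice("back", 3),))
```

Calling `unsupported_devices(mixed)` returns an empty list, while `unsupported_devices(legacy)` flags the `back` device.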