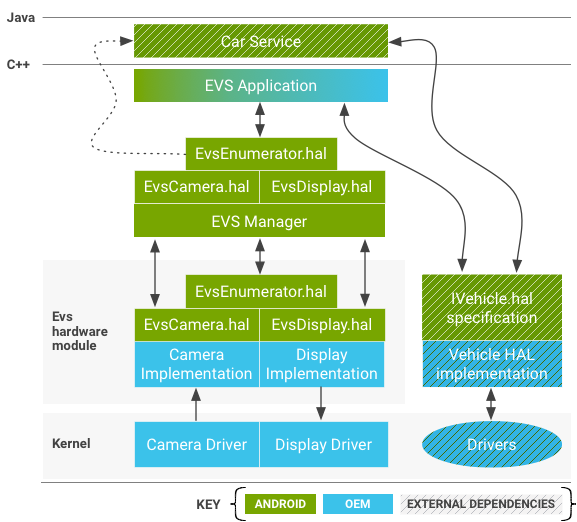

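Android contains an automotive HIDL Hardware Abstraction Layer (HAL) that provides for imagery capture and display very early in the Android boot process and continues functioning for the life of the system. The HAL includes the exterior view system (EVS) stack and is typically used to support rearview camera and surround view displays in vehicles with Android-based In-Vehicle Infotainment (IVI) systems. EVS also enables advanced features to be implemented in user apps.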

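Android also includes an EVS-specific capture and display driver interface (in /hardware/interfaces/automotive/evs/1.0). While it is possible to build a rearview camera app on top of existing Android camera and display services, such an app would likely run too late in the Android boot process. Using a dedicated HAL enables a streamlined interface and makes it clear what an OEM needs to implement to support the EVS stack.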

System components

EVS includes the following system components:

EVS application

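A sample C++ EVS application (/packages/services/Car/evs/app) serves as a reference implementation. This application is responsible for requesting video frames from the EVS Manager and sending finished frames back to the EVS Manager for display. It expects to be started by init as soon as EVS and Car Service are available, targeted within two (2) seconds of power on. OEMs can modify or replace the EVS application as desired.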

EVS Manager

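The EVS Manager (/packages/services/Car/evs/manager) provides the building blocks needed by an EVS application to implement anything from a simple rearview camera display to a 6DOF multi-camera rendering. Its interface is presented through HIDL and is built to accept multiple concurrent clients. Other apps and services (specifically the Car Service) can query the EVS Manager state to find out when the EVS system is active.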

EVS HIDL interface

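The EVS system, both camera and display elements, is defined in the android.hardware.automotive.evs package. A sample implementation that exercises the interface (it generates synthetic test images and validates that the images make the round trip) is provided in /hardware/interfaces/automotive/evs/1.0/default.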

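The OEM is responsible for implementing the API expressed by the .hal files in /hardware/interfaces/automotive/evs. Such implementations are responsible for configuring and gathering data from physical cameras and delivering it via shared memory buffers recognizable by Gralloc. The display side of the implementation is responsible for providing a shared memory buffer that can be filled by the app (usually via EGL rendering) and for presenting the finished frames in preference to anything else that might want to appear on the physical display. Vendor implementations of the EVS interface may be stored under /vendor/…, /device/…, or hardware/… (for example, /hardware/[vendor]/[platform]/evs).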

Kernel drivers

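A device that supports the EVS stack requires kernel drivers. Instead of creating new drivers, OEMs have the option to support EVS-required features via existing camera and/or display hardware drivers. Reusing drivers can be advantageous, especially for display drivers, where image presentation may require coordination with other active threads. Android 8.0 includes a v4l2-based sample driver (in packages/services/Car/evs/sampleDriver) that depends on the kernel for v4l2 support and on SurfaceFlinger for presenting the output image.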

EVS hardware interface description

This section describes the HAL. Vendors are expected to provide implementations of this API adapted for their hardware.

IEvsEnumerator

This object is responsible for enumerating the available EVS hardware in the system (one or more cameras and the single display device).

getCameraList() generates (vec<CameraDesc> cameras);

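Returns a vector containing descriptions for all cameras in the system. It is assumed that the set of cameras is fixed and knowable at boot time. For details on camera descriptions, see CameraDesc.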

openCamera(string camera_id) generates (IEvsCamera camera);

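Obtains an interface object used to interact with a specific camera identified by the unique camera_id string. Returns NULL on failure. Attempts to reopen a camera that is already open cannot fail. To avoid race conditions associated with app startup and shutdown, reopening a camera should shut down the previous instance so the new request can be fulfilled. A camera instance that has been preempted in this way must be put in an inactive state, awaiting final destruction and responding to any request that would affect the camera state with a return code of OWNERSHIP_LOST.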
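The preemption rule above can be modeled in a few lines. The following is a minimal, self-contained sketch in standard C++, not the HIDL-generated API: Enumerator, CameraHandle, and the two-value EvsResult enum are hypothetical stand-ins, and a real HAL would also validate camera_id against getCameraList() and return NULL for unknown IDs.

```cpp
#include <cassert>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical subset of result codes; the real EvsResult is generated from the .hal files.
enum class EvsResult { OK, OWNERSHIP_LOST };

// Hypothetical stand-in for an IEvsCamera instance.
class CameraHandle {
public:
    explicit CameraHandle(std::string id) : id_(std::move(id)) {}

    // Called by the enumerator when a newer openCamera() preempts this instance.
    void markPreempted() { active_ = false; }

    // Requests that would affect camera state fail once the instance is preempted.
    EvsResult startVideoStream() {
        return active_ ? EvsResult::OK : EvsResult::OWNERSHIP_LOST;
    }

    bool isActive() const { return active_; }
    const std::string& id() const { return id_; }

private:
    std::string id_;
    bool active_ = true;
};

// Hypothetical enumerator that implements "reopen preempts the previous instance".
class Enumerator {
public:
    std::shared_ptr<CameraHandle> openCamera(const std::string& cameraId) {
        assert(!cameraId.empty());  // camera IDs are opaque but never empty in this sketch
        auto it = open_.find(cameraId);
        if (it != open_.end()) {
            // Reopening must not fail: deactivate the previous instance instead.
            if (auto previous = it->second.lock()) previous->markPreempted();
        }
        auto camera = std::make_shared<CameraHandle>(cameraId);
        open_[cameraId] = camera;  // the enumerator observes but does not own the instance
        return camera;
    }

private:
    std::map<std::string, std::weak_ptr<CameraHandle>> open_;
};
```

The preempted instance stays alive for as long as its owner holds a reference, but it no longer changes camera state; the owner is expected to notice OWNERSHIP_LOST and release it.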

closeCamera(IEvsCamera camera);

Releases the IEvsCamera interface (and is the opposite of the openCamera() call). The camera video stream must be stopped by calling stopVideoStream() before calling closeCamera.

openDisplay() generates (IEvsDisplay display);

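Obtains an interface object used to interact exclusively with the system's EVS display. Only one client may hold a functional instance of IEvsDisplay at a time. Similar to the aggressive open behavior described in openCamera, a new IEvsDisplay object may be created at any time and disables any previous instances. Invalidated instances continue to exist and respond to function calls from their owners, but must perform no mutating operations when dead. Eventually, the client app is expected to notice the OWNERSHIP_LOST error return codes and close and release the inactive interface.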

closeDisplay(IEvsDisplay display);

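Releases the IEvsDisplay interface (and is the opposite of the openDisplay() call). Outstanding buffers received via getTargetBuffer() calls must be returned to the display before the display is closed.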

getDisplayState() generates (DisplayState state);

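Gets the current display state. The HAL implementation should report the actual current state, which might differ from the most recently requested state. The logic responsible for changing display states should exist above the device layer, so the HAL implementation should not change display states spontaneously. If the display is not currently held by any client (via a call to openDisplay), this function returns NOT_OPEN. Otherwise, it reports the current state of the EVS display (see the IEvsDisplay API).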

struct CameraDesc {
    string camera_id;
    int32  vendor_flags;  // Opaque value
}

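- camera_id. A string that uniquely identifies a given camera. It can be the kernel device name of the device or a name for the device, such as rearview. The value of this string is chosen by the HAL implementation and used opaquely by the stack above.
- vendor_flags. A method for passing specialized camera information opaquely from the driver to a custom EVS application. It is passed uninterpreted from the driver up to the EVS application, which is free to ignore it.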

IEvsCamera

This object represents a single camera and is the primary interface for capturing images.

getCameraInfo() generates (CameraDesc info);

Returns the CameraDesc of this camera.

setMaxFramesInFlight(int32 bufferCount) generates (EvsResult result);

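Specifies the depth of the buffer chain the camera is asked to support. Up to this many frames may be held concurrently by the client of IEvsCamera. If this many frames have been delivered to the receiver without being returned by doneWithFrame, the stream skips frames until a buffer is returned for reuse. It is legal for this call to come at any time, even while streams are already running, in which case buffers should be added to or removed from the chain as appropriate. If no call is made to this entry point, the IEvsCamera supports at least one frame by default; more is acceptable.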

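If the requested bufferCount cannot be accommodated, the function returns BUFFER_NOT_AVAILABLE or another relevant error code. In this case, the system continues operating with the previously set value.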
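A hypothetical model of the in-flight limit and the resulting frame skipping follows. FrameBudget and its methods are illustrative names, not part of the EVS HAL; a real implementation manages gralloc buffers rather than bare bufferId values, and returning INVALID_ARG for a count below one is an assumption.

```cpp
#include <cassert>
#include <cstdint>
#include <set>

// Hypothetical subset of result codes.
enum class EvsResult { OK, INVALID_ARG, BUFFER_NOT_AVAILABLE };

// Hypothetical model of setMaxFramesInFlight() and the frame skipping it causes.
class FrameBudget {
public:
    explicit FrameBudget(int32_t hardwareMax) : hardwareMax_(hardwareMax) {
        assert(hardwareMax >= 1);
    }

    EvsResult setMaxFramesInFlight(int32_t bufferCount) {
        if (bufferCount < 1) return EvsResult::INVALID_ARG;  // assumed policy
        if (bufferCount > hardwareMax_) {
            // Cannot be accommodated: keep running with the previous value.
            return EvsResult::BUFFER_NOT_AVAILABLE;
        }
        maxInFlight_ = bufferCount;
        return EvsResult::OK;
    }

    // Called for every captured frame. Returns true if the frame is delivered,
    // false if it is skipped because the client already holds maxInFlight_ frames.
    bool onFrameCaptured(uint32_t bufferId) {
        if (static_cast<int32_t>(held_.size()) >= maxInFlight_) return false;
        held_.insert(bufferId);
        return true;
    }

    // Returning a frame frees a slot; unknown buffers are rejected.
    EvsResult doneWithFrame(uint32_t bufferId) {
        return held_.erase(bufferId) ? EvsResult::OK : EvsResult::INVALID_ARG;
    }

    int32_t maxInFlight() const { return maxInFlight_; }

private:
    int32_t hardwareMax_;
    int32_t maxInFlight_ = 1;   // default when setMaxFramesInFlight() is never called
    std::set<uint32_t> held_;   // bufferIds currently held by the client
};
```

Lowering the limit while the client holds more frames than the new value simply causes skipping until enough frames are returned, which matches the "add or remove buffers as appropriate" rule without forcibly reclaiming buffers.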

startVideoStream(IEvsCameraStream receiver) generates (EvsResult result);

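Requests delivery of EVS camera frames from this camera. The IEvsCameraStream begins receiving periodic calls with new image frames until stopVideoStream() is called. Frames must begin being delivered within 500 ms of the startVideoStream call and, after starting, must be generated at a minimum of 10 FPS. The time required to start the video stream effectively counts against any rearview camera startup time requirement. If the stream is not started, an error code must be returned; otherwise, OK is returned.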

oneway doneWithFrame(BufferDesc buffer);

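Returns a frame that was delivered to the IEvsCameraStream. When done consuming a frame delivered to the IEvsCameraStream interface, the client must return the frame to the IEvsCamera for reuse. A small, finite number of buffers is available (possibly as few as one), and if the supply is exhausted, no further frames are delivered until a buffer is returned, potentially resulting in skipped frames (a buffer with a null handle denotes the end of a stream and does not need to be returned through this function). Returns OK on success, or an appropriate error code, potentially including INVALID_ARG or BUFFER_NOT_AVAILABLE.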

stopVideoStream();

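Stops the delivery of EVS camera frames. Because delivery is asynchronous, frames may continue to arrive for some time after this call returns. Each frame must be returned until the closure of the stream is signaled to the IEvsCameraStream. It is legal to call stopVideoStream on a stream that has already been stopped or never started, in which case the call is ignored.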

getExtendedInfo(int32 opaqueIdentifier) generates (int32 value);

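Requests driver-specific information from the HAL implementation. The values allowed for opaqueIdentifier are driver-specific, but no value passed may crash the driver. The driver should return 0 for any unrecognized opaqueIdentifier.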

setExtendedInfo(int32 opaqueIdentifier, int32 opaqueValue) generates (EvsResult result);

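Sends a driver-specific value to the HAL implementation. This extension is provided only to facilitate vehicle-specific extensions, and no HAL implementation should require this call in order to function in its default state. If the driver recognizes and accepts the values, it should return OK; otherwise, it should return INVALID_ARG or another representative error code.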
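The contract for these two extension calls (unknown identifiers read as 0 and never crash the driver; unrecognized writes are rejected) can be sketched as a lookup table. The class ExtendedInfo and the identifier kHypotheticalExposureId are invented for illustration; real identifiers and their meanings are entirely driver-specific.

```cpp
#include <cassert>
#include <cstdint>
#include <map>

// Hypothetical subset of result codes.
enum class EvsResult { OK, INVALID_ARG };

// Invented identifier for illustration only; real values are vendor-defined.
constexpr int32_t kHypotheticalExposureId = 0x1001;

// Hypothetical driver-side table backing getExtendedInfo()/setExtendedInfo().
class ExtendedInfo {
public:
    ExtendedInfo() { values_[kHypotheticalExposureId] = 0; }

    // Any identifier is safe to query; unrecognized ones simply read as 0.
    int32_t getExtendedInfo(int32_t opaqueIdentifier) const {
        auto it = values_.find(opaqueIdentifier);
        return it == values_.end() ? 0 : it->second;
    }

    // Only identifiers this driver recognizes are accepted.
    EvsResult setExtendedInfo(int32_t opaqueIdentifier, int32_t opaqueValue) {
        auto it = values_.find(opaqueIdentifier);
        if (it == values_.end()) return EvsResult::INVALID_ARG;
        it->second = opaqueValue;
        return EvsResult::OK;
    }

private:
    std::map<int32_t, int32_t> values_;
};
```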

struct BufferDesc {
    uint32 width;      // Units of pixels
    uint32 height;     // Units of pixels
    uint32 stride;     // Units of pixels
    uint32 pixelSize;  // Size of single pixel in bytes
    uint32 format;     // May contain values from android_pixel_format_t
    uint32 usage;      // May contain values from Gralloc.h
    uint32 bufferId;   // Opaque value
    handle memHandle;  // gralloc memory buffer handle
}

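Describes an image passed through the API. The HAL driver is responsible for filling out this structure to describe the image buffer, and the HAL client should treat this structure as read-only. The fields contain enough information to allow the client to reconstruct an ANativeWindowBuffer object, as may be required to use the image with EGL via the eglCreateImageKHR() extension.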

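- width. The width, in pixels, of the presented image.
- height. The height, in pixels, of the presented image.
- stride. The number of pixels each row actually occupies in memory, accounting for any padding for row alignment. Expressed in pixels to match the convention adopted by gralloc for its buffer descriptions.
- pixelSize. The number of bytes occupied by each individual pixel, enabling computation of the size in bytes needed to step between rows in the image (stride in bytes = stride in pixels * pixelSize).
- format. The pixel format used by the image. The format provided must be compatible with the platform's OpenGL implementation. To pass compatibility testing, HAL_PIXEL_FORMAT_YCRCB_420_SP should be preferred for camera usage, and RGBA or BGRA should be preferred for display.
- usage. Usage flags set by the HAL implementation. HAL clients are expected to pass these through unmodified (for details, refer to the related flags in Gralloc.h).
- bufferId. A unique value specified by the HAL implementation that allows a buffer to be recognized after a round trip through the HAL APIs. The value stored in this field may be chosen arbitrarily by the HAL implementation.
- memHandle. The handle for the underlying memory buffer that contains the image data. The HAL implementation might choose to store a Gralloc buffer handle here.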
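As a worked example of the stride relation, the sketch below computes byte offsets inside a mapped single-plane buffer such as RGBA. BufferGeometry, rowBytes, and pixelOffset are hypothetical helpers; planar YUV formats such as NV21 or YV12 store chroma in separate planes and need different arithmetic.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical subset of the BufferDesc fields needed for addressing.
struct BufferGeometry {
    uint32_t width;      // pixels
    uint32_t height;     // pixels
    uint32_t stride;     // pixels per row, including alignment padding
    uint32_t pixelSize;  // bytes per pixel
};

// stride in bytes = stride in pixels * pixelSize
inline std::size_t rowBytes(const BufferGeometry& g) {
    return static_cast<std::size_t>(g.stride) * g.pixelSize;
}

// Byte offset of pixel (x, y) from the start of a mapped single-plane buffer.
inline std::size_t pixelOffset(const BufferGeometry& g, uint32_t x, uint32_t y) {
    assert(x < g.width && y < g.height);
    return y * rowBytes(g) + static_cast<std::size_t>(x) * g.pixelSize;
}
```

For a 640-pixel-wide RGBA image padded to a stride of 672 pixels, each row occupies 672 × 4 = 2688 bytes, although only 640 × 4 = 2560 of them hold visible pixels; stepping rows by width instead of stride would shear the image.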

IEvsCameraStream

The client implements this interface to receive asynchronous video frame deliveries.

deliverFrame(BufferDesc buffer);

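Receives a call from the HAL each time a video frame is ready for inspection. Buffer handles received by this method must be returned via calls to IEvsCamera::doneWithFrame(). When the video stream is stopped via a call to IEvsCamera::stopVideoStream(), this callback might continue as the pipeline drains. Each frame must still be returned; when the last frame in the stream has been delivered, a NULL bufferHandle is delivered, signifying the end of the stream and that no further frame deliveries occur. The NULL bufferHandle itself does not need to be sent back via doneWithFrame(), but all other handles must be returned.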
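A minimal receiver that honors these rules (return every real frame, treat the null handle as the end of the stream, and do not return the null handle) might look like the following. FrameDesc and StreamReceiver are hypothetical simplifications: the real callback receives a BufferDesc whose memHandle is a native handle, and doneWithFrame is a call on the IEvsCamera proxy rather than a std::function.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <utility>

// Hypothetical, simplified frame descriptor; memHandle == nullptr marks end of stream.
struct FrameDesc {
    uint32_t bufferId;
    const void* memHandle;
};

// Hypothetical IEvsCameraStream-style receiver.
class StreamReceiver {
public:
    using DoneFn = std::function<void(const FrameDesc&)>;

    explicit StreamReceiver(DoneFn doneWithFrame) : doneWithFrame_(std::move(doneWithFrame)) {}

    void deliverFrame(const FrameDesc& frame) {
        if (frame.memHandle == nullptr) {
            // End of stream: no further frames follow, and this one is not returned.
            streamEnded_ = true;
            return;
        }
        assert(!streamEnded_);  // no real frames may arrive after the end marker
        ++framesSeen_;
        // ... inspect or render the image here ...
        doneWithFrame_(frame);  // every real frame goes back, even while the stream drains
    }

    bool streamEnded() const { return streamEnded_; }
    int framesSeen() const { return framesSeen_; }

private:
    DoneFn doneWithFrame_;
    bool streamEnded_ = false;
    int framesSeen_ = 0;
};
```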

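While proprietary buffer formats are technically possible, compatibility testing requires the buffer to be in one of the following five supported formats: NV21 (YCrCb 4:2:0 Semi-Planar), YV12 (YCrCb 4:2:0 Planar), YUYV (YCrCb 4:2:2 Interleaved), RGBA (32 bit R:G:B:x), or BGRA (32 bit B:G:R:x). The selected format must be a valid GL texture source on the platform's GLES implementation.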

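The app should not rely on any correspondence between the bufferId field and the memHandle in the BufferDesc structure. The bufferId values are essentially private to the HAL driver implementation, which may use (and reuse) them as it sees fit.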

IEvsDisplay

This object represents the EVS display, controls the state of the display, and handles the actual presentation of images.

getDisplayInfo() generates (DisplayDesc info);

Returns basic information about the EVS display provided by the system (see DisplayDesc).

setDisplayState(DisplayState state) generates (EvsResult result);

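Sets the display state. Clients may set the display state to express the desired state, and the HAL implementation must gracefully accept a request for any state while in any other state, although the response may be to ignore the request.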

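Upon initialization, the display is defined to start in the NOT_VISIBLE state, after which the client is expected to request the VISIBLE_ON_NEXT_FRAME state and begin providing video. When the display is no longer required, the client is expected to request the NOT_VISIBLE state after passing the last video frame.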

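It is valid for any state to be requested at any time. If the display is already visible, it should remain visible if set to VISIBLE_ON_NEXT_FRAME. Always returns OK unless the requested state is an unrecognized enum value, in which case INVALID_ARG is returned.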
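These state rules can be captured in a small state machine. DisplayModel is a hypothetical sketch; treating a direct VISIBLE request like VISIBLE_ON_NEXT_FRAME and silently ignoring NOT_OPEN or DEAD requests are assumptions, since the text only requires that such requests be accepted gracefully. presentFrame() stands in for the moment a frame is returned through returnTargetBufferForDisplay().

```cpp
#include <cassert>
#include <cstdint>

// Mirrors the DisplayState enum defined in this document.
enum class DisplayState : uint32_t { NOT_OPEN, NOT_VISIBLE, VISIBLE_ON_NEXT_FRAME, VISIBLE, DEAD };
// Hypothetical subset of result codes.
enum class EvsResult { OK, INVALID_ARG };

// Hypothetical model of IEvsDisplay state handling (not the generated HIDL class).
class DisplayModel {
public:
    // Takes the raw wire value so that unrecognized enum values can be detected.
    EvsResult setDisplayState(uint32_t requested) {
        if (requested > static_cast<uint32_t>(DisplayState::DEAD)) {
            return EvsResult::INVALID_ARG;
        }
        switch (static_cast<DisplayState>(requested)) {
            case DisplayState::NOT_VISIBLE:
                state_ = DisplayState::NOT_VISIBLE;
                break;
            case DisplayState::VISIBLE_ON_NEXT_FRAME:
            case DisplayState::VISIBLE:  // assumption: handled like VISIBLE_ON_NEXT_FRAME
                // An already visible display stays visible.
                if (state_ != DisplayState::VISIBLE) state_ = DisplayState::VISIBLE_ON_NEXT_FRAME;
                break;
            default:
                // Assumption: NOT_OPEN and DEAD requests are accepted but ignored.
                break;
        }
        return EvsResult::OK;
    }

    // Stand-in for a frame arriving via returnTargetBufferForDisplay().
    void presentFrame() {
        if (state_ == DisplayState::VISIBLE_ON_NEXT_FRAME) state_ = DisplayState::VISIBLE;
    }

    DisplayState getDisplayState() const { return state_; }

private:
    DisplayState state_ = DisplayState::NOT_VISIBLE;  // defined state after initialization
};
```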

getDisplayState() generates (DisplayState state);

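Gets the display state. The HAL implementation should report the actual current state, which might differ from the most recently requested state. The logic responsible for changing display states should exist above the device layer, so the HAL implementation should not change display states spontaneously.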

getTargetBuffer() generates (handle bufferHandle);

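Returns a handle to a frame buffer associated with the display. This buffer may be locked and written to by software and/or GL. This buffer must be returned via a call to returnTargetBufferForDisplay() even if the display is no longer visible.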

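While proprietary buffer formats are technically possible, compatibility testing requires the buffer to be in one of the following five supported formats: NV21 (YCrCb 4:2:0 Semi-Planar), YV12 (YCrCb 4:2:0 Planar), YUYV (YCrCb 4:2:2 Interleaved), RGBA (32 bit R:G:B:x), or BGRA (32 bit B:G:R:x). The selected format must be a valid GL render target on the platform's GLES implementation.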

On error, a buffer with a null handle is returned, but such a buffer does not need to be passed back to returnTargetBufferForDisplay.

returnTargetBufferForDisplay(handle bufferHandle) generates (EvsResult result);

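Tells the display that the buffer is ready for display. Only buffers retrieved through a call to getTargetBuffer() are valid for use with this call, and the contents of the BufferDesc must not be modified by the client app. After this call, the buffer is no longer valid for use by the client. Returns OK on success, or an appropriate error code, potentially including INVALID_ARG or BUFFER_NOT_AVAILABLE.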
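The ownership handoff between getTargetBuffer() and returnTargetBufferForDisplay() can be sketched with a single-buffer model. TargetBufferModel is hypothetical; handles are plain integers with 0 standing for the null handle, and both rejecting a second getTargetBuffer() while a buffer is outstanding and returning BUFFER_NOT_AVAILABLE for a buffer that was already handed back are assumed policies, not documented ones.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical subset of result codes.
enum class EvsResult { OK, INVALID_ARG, BUFFER_NOT_AVAILABLE };

constexpr uint64_t kNullHandle = 0;         // models the "null handle" error result
constexpr uint64_t kDisplayHandle = 0xE75;  // arbitrary illustrative handle value

// Hypothetical single-buffer model of the display's target buffer.
class TargetBufferModel {
public:
    uint64_t getTargetBuffer() {
        // Assumed policy: only one buffer can be outstanding at a time.
        if (outstanding_) return kNullHandle;
        outstanding_ = true;
        return kDisplayHandle;
    }

    EvsResult returnTargetBufferForDisplay(uint64_t handle) {
        if (handle != kDisplayHandle) return EvsResult::INVALID_ARG;  // not from getTargetBuffer()
        if (!outstanding_) return EvsResult::BUFFER_NOT_AVAILABLE;    // assumed: already returned
        outstanding_ = false;  // ownership passes back to the display
        ++framesPresented_;
        return EvsResult::OK;
    }

    // closeDisplay() precondition: every buffer from getTargetBuffer() has been returned.
    bool canClose() const { return !outstanding_; }
    int framesPresented() const { return framesPresented_; }

private:
    bool outstanding_ = false;
    int framesPresented_ = 0;
};
```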

struct DisplayDesc {
    string display_id;
    int32  vendor_flags;  // Opaque value
}

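Describes the basic properties of an EVS display, as required by an EVS implementation. The HAL is responsible for filling out this structure to describe the EVS display. The display can be a physical display or a virtual display that is overlaid or mixed with another presentation device.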

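- display_id. A string that uniquely identifies the display. This can be the kernel device name of the device or a name for the device, such as rearview. The value of this string is chosen by the HAL implementation and used opaquely by the stack above.
- vendor_flags. A method for passing specialized display information opaquely from the driver to a custom EVS application. It is passed uninterpreted from the driver up to the EVS application, which is free to ignore it.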

enum DisplayState : uint32 {
    NOT_OPEN,               // Display has not been "opened" yet
    NOT_VISIBLE,            // Display is inhibited
    VISIBLE_ON_NEXT_FRAME,  // Will become visible with next frame
    VISIBLE,                // Display is currently active
    DEAD,                   // Display is not available. Interface should be closed
}

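Describes the state of the EVS display, which can be disabled (not visible to the driver) or enabled (showing an image to the driver). Includes a transient state in which the display is not yet visible but is prepared to become visible with the delivery of the next frame of imagery via the returnTargetBufferForDisplay() call.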

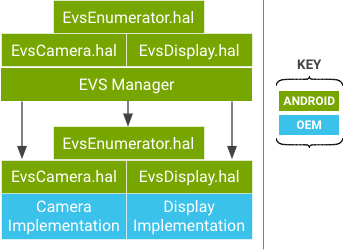

EVS Manager

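The EVS Manager provides the public interface to the EVS system for collecting and presenting external camera views. Where hardware drivers allow only one active interface per resource (camera or display), the EVS Manager facilitates shared access to the cameras. A single primary EVS app is the first client of the EVS Manager and is the only client permitted to write display data (additional clients can be granted read-only access to camera images).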

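The EVS Manager implements the same API as the underlying HAL drivers and provides expanded service by supporting multiple concurrent clients (more than one client can open a camera through the EVS Manager and receive a video stream).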

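Apps see no difference when operating through the EVS hardware HAL implementation or the EVS Manager API, except that the EVS Manager API allows concurrent camera stream access. The EVS Manager is itself the one allowed client of the EVS hardware HAL layer and acts as a proxy for the EVS hardware HAL.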

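The following sections describe only those calls that have different (extended) behavior in the EVS Manager implementation; the remaining calls are identical to the EVS HAL descriptions.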

IEvsEnumerator

openCamera(string camera_id) generates (IEvsCamera camera);

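Obtains an interface object used to interact with a specific camera identified by the unique camera_id string. Returns NULL on failure. At the EVS Manager layer, as long as sufficient system resources are available, a camera that is already open may be opened again by another process, allowing the video stream to be teed to multiple consumer apps. The camera_id strings at the EVS Manager layer are the same as those reported to the EVS hardware layer.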

IEvsCamera

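The IEvsCamera implementation provided by the EVS Manager is internally virtualized, so operations on a camera by one client do not affect other clients, which retain independent access to their cameras.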

startVideoStream(IEvsCameraStream receiver) generates (EvsResult result);

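Starts video streams. Clients may independently start and stop video streams on the same underlying camera. The underlying camera starts when the first client starts its stream.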

doneWithFrame(uint32 frameId, handle bufferHandle) generates (EvsResult result);

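Returns a frame. Each client must return its frames when it is done with them, but is permitted to hold onto them for as long as it desires. When the frame count held by a client reaches its configured limit, it receives no more frames until it returns one. This frame skipping does not affect other clients, which continue to receive all frames as expected.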
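A hypothetical sketch of this per-client frame skipping follows: each hardware frame is offered to every client, and only clients that have reached their own limit skip it. FrameFanOut is illustrative only; the real EVS Manager must also hold each hardware buffer until every client that received it has returned it, which this model omits.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <set>

// Hypothetical model of one hardware stream fanned out to several clients.
class FrameFanOut {
public:
    void addClient(int clientId, std::size_t maxInFlight) {
        assert(maxInFlight >= 1);
        clients_[clientId] = Client{maxInFlight, {}};
    }

    // Offers a hardware frame to every client; returns how many received it.
    int onHardwareFrame(uint32_t frameId) {
        int delivered = 0;
        for (auto& entry : clients_) {
            Client& client = entry.second;
            if (client.held.size() < client.maxInFlight) {
                client.held.insert(frameId);
                ++delivered;
            }
            // Otherwise only this client skips the frame; others are unaffected.
        }
        return delivered;
    }

    void doneWithFrame(int clientId, uint32_t frameId) { clients_.at(clientId).held.erase(frameId); }

    std::size_t heldBy(int clientId) const { return clients_.at(clientId).held.size(); }

private:
    struct Client {
        std::size_t maxInFlight;
        std::set<uint32_t> held;  // frames this client has not yet returned
    };
    std::map<int, Client> clients_;
};
```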

stopVideoStream();

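Stops a video stream. Each client can stop its video stream at any time without affecting other clients. The underlying camera stream at the hardware layer is stopped when the last client of a given camera stops its stream.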
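Together, the startVideoStream() and stopVideoStream() rules amount to reference counting on the underlying hardware stream, as in this hypothetical sketch. SharedCameraStream and its counters are illustrative; the real manager forwards these transitions to the hardware IEvsCamera.

```cpp
#include <cassert>
#include <set>

// Hypothetical sketch: the hardware stream runs while at least one client streams.
class SharedCameraStream {
public:
    void startVideoStream(int clientId) {
        if (active_.empty()) ++hardwareStarts_;  // first client: start the hardware stream
        active_.insert(clientId);
    }

    void stopVideoStream(int clientId) {
        // Stopping a stream that is not running is legal and ignored.
        if (active_.erase(clientId) == 0) return;
        if (active_.empty()) ++hardwareStops_;  // last client left: stop the hardware stream
    }

    bool hardwareRunning() const { return !active_.empty(); }
    int hardwareStarts() const { return hardwareStarts_; }
    int hardwareStops() const { return hardwareStops_; }

private:
    std::set<int> active_;  // clients with a running stream
    int hardwareStarts_ = 0;
    int hardwareStops_ = 0;
};
```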

setExtendedInfo(int32 opaqueIdentifier, int32 opaqueValue) generates (EvsResult result);

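Sends a driver-specific value, potentially enabling one client to affect another. Because the EVS Manager cannot understand the implications of vendor-defined control words, they are not virtualized, and any side effects apply to all clients of a given camera. For example, if a vendor used this call to change frame rates, all clients of the affected hardware layer camera would receive frames at the new rate.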

IEvsDisplay

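Only one owner of the display is allowed, even at the EVS Manager level. The Manager adds no functionality and simply passes the IEvsDisplay interface directly through to the underlying HAL implementation.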

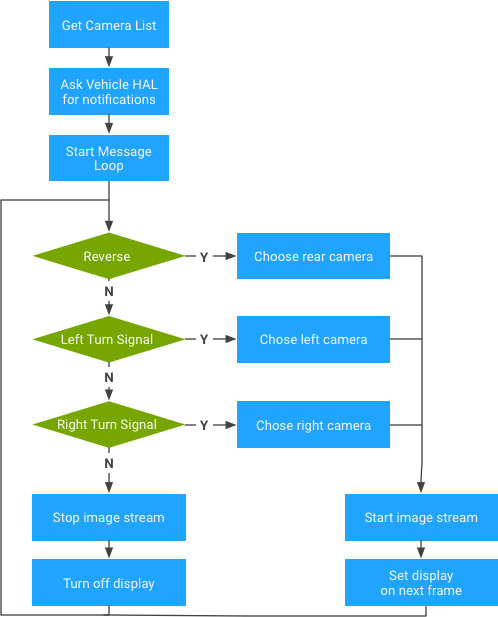

EVS application

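Android includes a native C++ reference implementation of an EVS application that communicates with the EVS Manager and the Vehicle HAL to provide basic rearview camera functions. The app is expected to start very early in the system boot process, with suitable video shown depending on the available cameras and the state of the car (gear and turn signal state). OEMs can modify or replace the EVS app with their own vehicle-specific logic and presentation.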

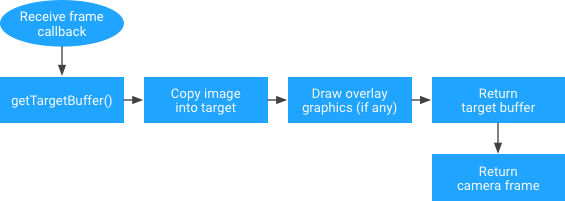

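Because image data is presented to the app in a standard graphics buffer, the app is responsible for moving the image from the source buffer into the output buffer. While this introduces the cost of a data copy, it also offers the app the opportunity to render the image into the display buffer in any fashion it desires.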

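For example, the app may choose to move the pixel data itself, potentially with an inline scale or rotation operation. The app could also choose to use the source image as an OpenGL texture and render a complex scene to the output buffer, including virtual elements such as icons, guidelines, and animations. A more sophisticated app may also select multiple concurrent input cameras and merge them into a single output frame (such as for a top-down virtual view of the vehicle's surroundings).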
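As an illustration of moving pixel data with an inline rotation, the function below copies a 32-bit-per-pixel image into the display buffer rotated by 180 degrees while honoring both buffers' strides. It assumes both buffers are already mapped and share the same 32-bit format (for example RGBA); a camera delivering NV21 would first need a color conversion, which is often done on the GPU instead. copyRotate180 is a hypothetical helper.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Copies a width x height image of 32-bit pixels from src to dst, rotated 180 degrees.
// Strides are in pixels, matching the BufferDesc convention.
void copyRotate180(const uint32_t* src, uint32_t srcStride,
                   uint32_t* dst, uint32_t dstStride,
                   uint32_t width, uint32_t height) {
    assert(srcStride >= width && dstStride >= width);
    for (uint32_t y = 0; y < height; ++y) {
        // Source row y lands on destination row (height - 1 - y).
        const uint32_t* srcRow = src + static_cast<std::size_t>(y) * srcStride;
        uint32_t* dstRow = dst + static_cast<std::size_t>(height - 1 - y) * dstStride;
        for (uint32_t x = 0; x < width; ++x) {
            // Horizontal mirror within the row; padding pixels are never read or written.
            dstRow[width - 1 - x] = srcRow[x];
        }
    }
}
```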

Using EGL/SurfaceFlinger in the EVS display HAL

This section explains how to use EGL to render an EVS display HAL implementation in Android 10.

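An EVS HAL reference implementation uses EGL to render the camera preview on the screen and uses libgui to create the target EGL render surface. In Android 8 (and higher), libgui is classified as VNDK-private, which refers to a group of libraries available to VNDK libraries that vendor processes cannot use. Because HAL implementations must reside in the vendor partition, vendors are prevented from using Surface in HAL implementations.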

Building libgui for vendor processes

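Using libgui is the only option for using EGL/SurfaceFlinger in EVS display HAL implementations. The most straightforward way to implement libgui is through frameworks/native/libs/gui directly, by using an additional build target in the build script. This target is exactly the same as the libgui target except for the addition of two fields: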

- name
- vendor_available

cc_library_shared {
    name: "libgui_vendor",
    vendor_available: true,
    vndk: {
        enabled: false,
    },
    double_loadable: true,
    defaults: ["libgui_bufferqueue-defaults"],
    srcs: [
    …
    // bufferhub is not used when building libgui for vendors
    target: {
        vendor: {
            cflags: [
                "-DNO_BUFFERHUB",
                "-DNO_INPUT",
            ],
    …

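Note: Vendor targets are built with the NO_INPUT macro, which removes one 32-bit word from the parcel data. Because SurfaceFlinger still expects this field, which has been removed, SurfaceFlinger fails to parse the parcel. This is observed as an fcntl failure: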

W Parcel : Attempt to read object from Parcel 0x78d9cffad8 at offset 428 that is not in the object list
E Parcel : fcntl(F_DUPFD_CLOEXEC) failed in Parcel::read, i is 0, fds[i] is 0, fd_count is 20, error: Unknown error 2147483647
W Parcel : Attempt to read object from Parcel 0x78d9cffad8 at offset 544 that is not in the object list

To resolve this condition:

diff --git a/libs/gui/LayerState.cpp b/libs/gui/LayerState.cpp
index 6066421fa..25cf5f0ce 100644
--- a/libs/gui/LayerState.cpp
+++ b/libs/gui/LayerState.cpp
@@ -54,6 +54,9 @@ status_t layer_state_t::write(Parcel& output) const
     output.writeFloat(color.b);
 #ifndef NO_INPUT
     inputInfo.write(output);
+#else
+    // Write a dummy 32-bit word.
+    output.writeInt32(0);
 #endif
     output.write(transparentRegion);
     output.writeUint32(transform);
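Why a single missing word breaks parsing can be shown with a toy model: the reader walks the parcel at fixed positions, so dropping one 32-bit word shifts every later field, and writing a dummy word restores the layout. ToyParcel, writeLayerState, and readTransform are hypothetical and far simpler than android::Parcel and layer_state_t, where the same shift surfaces as the object-list and fcntl errors shown above.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy stand-in for a parcel: a flat sequence of 32-bit words (not android::Parcel).
using ToyParcel = std::vector<int32_t>;

// Writer side, mimicking the layer_state_t::write() pattern in the diff.
// withInput == false models a vendor build compiled with -DNO_INPUT.
ToyParcel writeLayerState(int32_t color, int32_t transform, bool withInput, bool padWhenNoInput) {
    ToyParcel p;
    p.push_back(color);
    if (withInput) {
        p.push_back(0x1234);  // stands in for inputInfo.write(output)
    } else if (padWhenNoInput) {
        p.push_back(0);       // the dummy 32-bit word added by the fix
    }
    p.push_back(transform);
    return p;
}

// Reader side (the SurfaceFlinger role) always expects the input word at index 1.
// Returns the decoded transform, or -1 if the parcel is too short.
int32_t readTransform(const ToyParcel& p) {
    const std::size_t kTransformIndex = 2;  // color, inputInfo, transform
    return p.size() > kTransformIndex ? p[kTransformIndex] : -1;
}
```

The padding fix keeps the wire layout identical for both builds at the cost of one unused word, which is far cheaper than teaching the framework side about a second layout.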

Sample build instructions are provided below. You should expect to find $(ANDROID_PRODUCT_OUT)/system/lib64/libgui_vendor.so.

$ cd <your_android_source_tree_top>
$ . ./build/envsetup.
$ lunch <product_name>-<build_variant>
============================================
PLATFORM_VERSION_CODENAME=REL
PLATFORM_VERSION=10
TARGET_PRODUCT=<product_name>
TARGET_BUILD_VARIANT=<build_variant>
TARGET_BUILD_TYPE=release
TARGET_ARCH=arm64
TARGET_ARCH_VARIANT=armv8-a
TARGET_CPU_VARIANT=generic
TARGET_2ND_ARCH=arm
TARGET_2ND_ARCH_VARIANT=armv7-a-neon
TARGET_2ND_CPU_VARIANT=cortex-a9
HOST_ARCH=x86_64
HOST_2ND_ARCH=x86
HOST_OS=linux
HOST_OS_EXTRA=<host_linux_version>
HOST_CROSS_OS=windows
HOST_CROSS_ARCH=x86
HOST_CROSS_2ND_ARCH=x86_64
HOST_BUILD_TYPE=release
BUILD_ID=QT
OUT_DIR=out
============================================

$ m -j libgui_vendor
…
$ find $ANDROID_PRODUCT_OUT/system -name "libgui_vendor*"
.../out/target/product/hawk/system/lib64/libgui_vendor.so
.../out/target/product/hawk/system/lib/libgui_vendor.so

Using binder in an EVS HAL implementation

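In Android 8 (and higher), the /dev/binder device node became exclusive to framework processes and is therefore inaccessible to vendor processes. Instead, vendor processes should use /dev/hwbinder and must convert any AIDL interfaces to HIDL. Those who want to continue using AIDL interfaces between vendor processes should use the binder domain /dev/vndbinder.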

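| IPC domain | Description |
|---|---|
| /dev/binder | IPC between framework/app processes with AIDL interfaces |
| /dev/hwbinder | IPC between framework/vendor processes with HIDL interfaces; IPC between vendor processes with HIDL interfaces |
| /dev/vndbinder | IPC between vendor/vendor processes with AIDL interfaces |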

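While SurfaceFlinger defines AIDL interfaces, vendor processes can only use HIDL interfaces to communicate with framework processes. Converting existing AIDL interfaces into HIDL requires a non-trivial amount of work. Fortunately, Android provides a way to select the binder driver used by libbinder, the user-space library that processes link against.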

diff --git a/evs/sampleDriver/service.cpp b/evs/sampleDriver/service.cpp
index d8fb3166..5fd02935 100644
--- a/evs/sampleDriver/service.cpp
+++ b/evs/sampleDriver/service.cpp
@@ -21,6 +21,7 @@
 #include <utils/Errors.h>
 #include <utils/StrongPointer.h>
 #include <utils/Log.h>
+#include <binder/ProcessState.h>
 #include "ServiceNames.h"
 #include "EvsEnumerator.h"
@@ -43,6 +44,9 @@ using namespace android;
 int main() {
     ALOGI("EVS Hardware Enumerator service is starting");
+    // Use /dev/binder for SurfaceFlinger
+    ProcessState::initWithDriver("/dev/binder");
+
     // Start a thread to listen to video device addition events.
     std::atomic<bool> running { true };
     std::thread ueventHandler(EvsEnumerator::EvsUeventThread, std::ref(running));

Note: Vendor processes should call this before calling into Process or IPCThreadState, and before making any binder calls.

SELinux policy

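If the device implementation is full Treble, SELinux prevents vendor processes from using /dev/binder. For example, the EVS HAL sample implementation is assigned to the hal_evs_driver domain and requires read/write permissions on the binder_device domain.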

W ProcessState: Opening '/dev/binder' failed: Permission denied
F ProcessState: Binder driver could not be opened. Terminating.
F libc : Fatal signal 6 (SIGABRT), code -1 (SI_QUEUE) in tid 9145 (android.hardwar), pid 9145 (android.hardwar)
W android.hardwar: type=1400 audit(0.0:974): avc: denied { read write } for name="binder" dev="tmpfs" ino=2208 scontext=u:r:hal_evs_driver:s0 tcontext=u:object_r:binder_device:s0 tclass=chr_file permissive=0

However, adding these permissions causes a build failure because it violates the following neverallow rule, defined in system/sepolicy/domain.te for full-Treble devices.

libsepol.report_failure: neverallow on line 631 of system/sepolicy/public/domain.te (or line 12436 of policy.conf) violated by allow hal_evs_driver binder_device:chr_file { read write };
libsepol.check_assertions: 1 neverallow failures occurred

full_treble_only(`
    neverallow {
        domain
        -coredomain
        -appdomain
        -binder_in_vendor_violators
    } binder_device:chr_file rw_file_perms;
')

binder_in_vendor_violators is an attribute provided to catch bugs and guide development. It can also be used to resolve the Android 10 violation described above.

diff --git a/evs/sepolicy/evs_driver.te b/evs/sepolicy/evs_driver.te
index f1f31e9fc..6ee67d88e 100644
--- a/evs/sepolicy/evs_driver.te
+++ b/evs/sepolicy/evs_driver.te
@@ -3,6 +3,9 @@ type hal_evs_driver, domain, coredomain;
 hal_server_domain(hal_evs_driver, hal_evs)
 hal_client_domain(hal_evs_driver, hal_evs)
+# Allow to use /dev/binder
+typeattribute hal_evs_driver binder_in_vendor_violators;
+
 # allow init to launch processes in this context
 type hal_evs_driver_exec, exec_type, file_type, system_file_type;
 init_daemon_domain(hal_evs_driver)

Building the EVS HAL reference implementation as a vendor process

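As a reference, you can apply the following changes under packages/services/Car/evs (the sample driver's Android.mk and service.cpp, and the EVS SELinux policy). Be sure to confirm that all of the described changes work for your implementation.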

diff --git a/evs/sampleDriver/Android.mk b/evs/sampleDriver/Android.mk
index 734feea7d..0d257214d 100644
--- a/evs/sampleDriver/Android.mk
+++ b/evs/sampleDriver/Android.mk
@@ -16,7 +16,7 @@ LOCAL_SRC_FILES := \
 LOCAL_SHARED_LIBRARIES := \
     android.hardware.automotive.evs@1.0 \
     libui \
-    libgui \
+    libgui_vendor \
     libEGL \
     libGLESv2 \
     libbase \
@@ -33,6 +33,7 @@ LOCAL_SHARED_LIBRARIES := \
 LOCAL_INIT_RC := android.hardware.automotive.evs@1.0-sample.rc
 LOCAL_MODULE := android.hardware.automotive.evs@1.0-sample
+LOCAL_PROPRIETARY_MODULE := true
 LOCAL_MODULE_TAGS := optional
 LOCAL_STRIP_MODULE := keep_symbols
@@ -40,6 +41,7 @@ LOCAL_STRIP_MODULE := keep_symbols
 LOCAL_CFLAGS += -DLOG_TAG=\"EvsSampleDriver\"
 LOCAL_CFLAGS += -DGL_GLEXT_PROTOTYPES -DEGL_EGLEXT_PROTOTYPES
 LOCAL_CFLAGS += -Wall -Werror -Wunused -Wunreachable-code
+LOCAL_CFLAGS += -Iframeworks/native/include
 # NOTE: It can be helpful, while debugging, to disable optimizations
 #LOCAL_CFLAGS += -O0 -g

diff --git a/evs/sampleDriver/service.cpp b/evs/sampleDriver/service.cpp
index d8fb31669..5fd029358 100644
--- a/evs/sampleDriver/service.cpp
+++ b/evs/sampleDriver/service.cpp
@@ -21,6 +21,7 @@
 #include <utils/Errors.h>
 #include <utils/StrongPointer.h>
 #include <utils/Log.h>
+#include <binder/ProcessState.h>
 #include "ServiceNames.h"
 #include "EvsEnumerator.h"
@@ -43,6 +44,9 @@ using namespace android;
 int main() {
     ALOGI("EVS Hardware Enumerator service is starting");
+    // Use /dev/binder for SurfaceFlinger
+    ProcessState::initWithDriver("/dev/binder");
+
     // Start a thread to listen video device addition events.
     std::atomic<bool> running { true };
     std::thread ueventHandler(EvsEnumerator::EvsUeventThread, std::ref(running));

diff --git a/evs/sepolicy/evs_driver.te b/evs/sepolicy/evs_driver.te
index f1f31e9fc..632fc7337 100644
--- a/evs/sepolicy/evs_driver.te
+++ b/evs/sepolicy/evs_driver.te
@@ -3,6 +3,9 @@ type hal_evs_driver, domain, coredomain;
 hal_server_domain(hal_evs_driver, hal_evs)
 hal_client_domain(hal_evs_driver, hal_evs)
+# allow to use /dev/binder
+typeattribute hal_evs_driver binder_in_vendor_violators;
+
 # allow init to launch processes in this context
 type hal_evs_driver_exec, exec_type, file_type, system_file_type;
 init_daemon_domain(hal_evs_driver)
@@ -22,3 +25,7 @@ allow hal_evs_driver ion_device:chr_file r_file_perms;
 # Allow the driver to access kobject uevents
 allow hal_evs_driver self:netlink_kobject_uevent_socket create_socket_perms_no_ioctl;
+
+# Allow the driver to use the binder device
+allow hal_evs_driver binder_device:chr_file rw_file_perms;