The Android framework offers a variety of graphics rendering APIs for 2D and 3D that interact with manufacturer implementations of graphics drivers, so it is important to have a good understanding of how those APIs work at a higher level. This page introduces the graphics hardware abstraction layer (HAL) that those drivers are built on. Before continuing with this section, familiarize yourself with the following terms:

Canvas (API element): A drawing surface that handles compositing of the actual bits against a bitmap or a Surface object. The Canvas class has methods for standard computer drawing of bitmaps, lines, circles, rectangles, text, and so on, and is bound to a bitmap or surface. A canvas is the simplest, easiest way to draw 2D objects on the screen. The base class is Canvas.

Drawable: A visual resource that can be used as a background, title, or other part of the screen. Drawable classes are in the android.graphics.drawable package. For more information about drawables and other resources, see App resources overview.

OpenGL ES: A cross-platform API for rendering 2D and 3D graphics. The android.opengl and javax.microedition.khronos.opengles packages expose OpenGL ES functionality.

Surface (API element): A block of memory that gets composited to the screen, represented by a Surface object. Use the SurfaceView class instead of the Surface class directly.

SurfaceView (API element): A View object that wraps a Surface object for drawing, and exposes methods to specify its size and format dynamically. A surface view provides a way to draw independently of the UI thread for resource-intensive operations, such as games or camera previews, but it uses extra memory as a result. A surface view supports both canvas and OpenGL ES graphics. The base class for a SurfaceView object is SurfaceView.

Theme: A set of properties, such as text size and background color, that define default display settings. Android provides a few standard themes, listed in R.style and prefaced with Theme_.

View (API element): An object that draws a rectangular area on the screen. The View class is the base class for most layout components of an activity or dialog screen, such as text boxes and windows. A View object receives calls from its parent object (see ViewGroup) to draw itself, and informs its parent object about its preferred size and location, which might not be respected by the parent. For more information, see View.

ViewGroup (API element): A container that groups a set of child views and decides where they are positioned and how large they can be. View groups are in the android.widget package, but extend the ViewGroup class.

Widget: A fully implemented View subclass that renders a form element or other UI component, such as a text box or popup menu. Widgets are in the android.widget package.

Window (API element): An object derived from the Window abstract class that specifies the elements of a generic window, such as the look and feel, title bar text, and location and content of menus. Dialogs and activities use an implementation of the Window class to render a Window object. You don't need to implement the Window class or use windows in your app.

App developers draw images to the screen in three ways: with Canvas, OpenGL ES, or Vulkan.

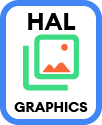

Android graphics components

No matter what rendering API developers use, everything is rendered onto a surface. The surface represents the producer side of a buffer queue that is often consumed by SurfaceFlinger. Every window that is created on the Android platform is backed by a surface. All of the visible surfaces rendered are composited onto the display by SurfaceFlinger.

The following diagram shows how the key components work together:

Figure 1. How surfaces are rendered.

The main components are described in the following sections.

Image stream producers

An image stream producer can be anything that produces graphic buffers for consumption. Examples include OpenGL ES, Canvas 2D, and mediaserver video decoders.

Image stream consumers

The most common consumer of image streams is SurfaceFlinger, the system service that consumes the currently visible surfaces and composites them onto the display using information provided by the Window Manager. SurfaceFlinger is the only service that can modify the content of the display. SurfaceFlinger uses OpenGL and the Hardware Composer (HWC) to compose a group of surfaces.

Other OpenGL ES apps can consume image streams as well, such as the camera app consuming a camera preview image stream. Non-GL apps can be consumers too, for example the ImageReader class.

Hardware Composer

The Hardware Composer (HWC) is the hardware abstraction for the display subsystem. SurfaceFlinger can delegate certain composition work to the HWC to offload work from OpenGL ES and the GPU, which makes compositing lower power than having the GPU conduct all computation. For the composition it performs itself, SurfaceFlinger acts as just another OpenGL ES client. So when SurfaceFlinger is actively compositing one buffer or two into a third, for instance, it's using OpenGL ES.

The Hardware Composer HAL conducts the other half of the work and is the central point for all Android graphics rendering. The HWC must support events, including VSync and hotplug (for plug-and-play HDMI support).
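The split between HWC and GPU composition can be pictured with a small sketch. This is a toy model, not SurfaceFlinger's actual logic: the `Layer` class, the `overlay_planes` capacity, and the "lowest layers first" policy are all assumptions made for illustration. The `DEVICE` and `CLIENT` labels borrow the terms Hardware Composer uses for composition done by the display hardware and by SurfaceFlinger on the GPU, respectively.

```python
from dataclasses import dataclass
from enum import Enum


class Composition(Enum):
    DEVICE = "device"  # handled by the display hardware through the HWC
    CLIENT = "client"  # drawn by SurfaceFlinger on the GPU with OpenGL ES


@dataclass(frozen=True)
class Layer:
    name: str
    z_order: int


def assign_composition(layers, overlay_planes):
    """Map each layer name to DEVICE or CLIENT composition.

    Hypothetical policy: if every layer fits in a hardware plane, the GPU is
    not used. Otherwise one plane is reserved for the GPU-composited result,
    and the layers that do not fit are flattened into it.
    """
    if overlay_planes < 1:
        raise ValueError("the display needs at least one plane")
    ordered = sorted(layers, key=lambda layer: layer.z_order)
    if len(ordered) <= overlay_planes:
        return {layer.name: Composition.DEVICE for layer in ordered}
    device_count = overlay_planes - 1
    return {
        layer.name: Composition.DEVICE if index < device_count else Composition.CLIENT
        for index, layer in enumerate(ordered)
    }


layers = [
    Layer("status bar", 2),
    Layer("wallpaper", 0),
    Layer("app", 1),
    Layer("navigation bar", 3),
]
for name, kind in assign_composition(layers, overlay_planes=3).items():
    print(f"{name}: {kind.value}")
```

With three planes in this model, the two lowest layers stay on the display hardware and the remaining two are flattened by the GPU into a third buffer, which mirrors the "one buffer or two into a third" case described above. A real HWC implementation decides per frame which layers it can handle, based on hardware limits that this sketch does not represent.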

Gralloc

The graphics memory allocator (Gralloc) is needed to allocate memory requested by image producers. For details, see BufferQueue and Gralloc.

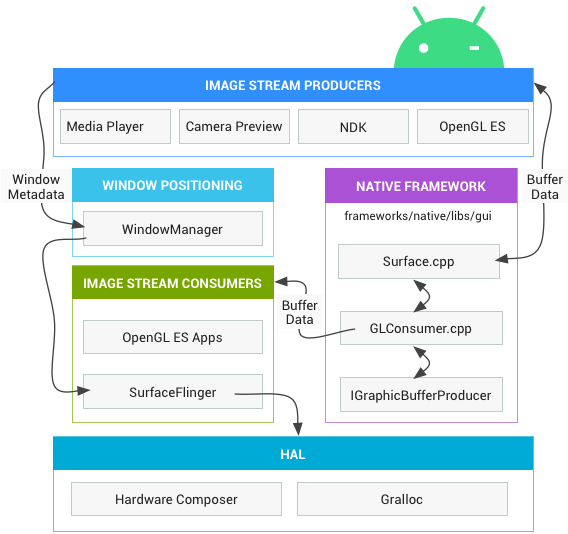

Data flow

The following diagram depicts the Android graphics pipeline:

Figure 2. Graphic data flow through Android.

The objects on the left are renderers producing graphics buffers, such as the home screen, status bar, and system UI. SurfaceFlinger is the compositor and the HWC is the composer.

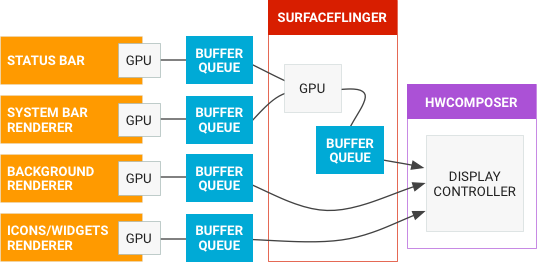

BufferQueue

BufferQueues provide the glue between the Android graphics components. These are a pair of queues that mediate the constant cycle of buffers from the producer to the consumer. After the producers hand off their buffers, SurfaceFlinger is responsible for compositing everything onto the display.

The following diagram illustrates the BufferQueue communication process:

Figure 3. BufferQueue communication process.

BufferQueue contains the logic that ties image stream producers and image stream consumers together. Some examples of image producers are the camera previews produced by the camera HAL or OpenGL ES games. Some examples of image consumers are SurfaceFlinger or another app that displays an OpenGL ES stream, such as the camera app displaying the camera viewfinder.
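The producer and consumer cycle can be sketched as a single-process toy model. The real BufferQueue is native code that passes graphics buffers between processes; the `ToyBufferQueue` class below is hypothetical and only illustrates the ownership hand-off. Its four method names are snake_case versions of the BufferQueue operations dequeueBuffer, queueBuffer, acquireBuffer, and releaseBuffer; everything else is an assumption.

```python
from collections import deque


class ToyBufferQueue:
    """A fixed pool of buffer slots plus a FIFO of filled slots."""

    def __init__(self, buffer_count):
        self.free = deque(range(buffer_count))  # slots the producer may fill
        self.queued = deque()                    # filled slots awaiting the consumer
        self.contents = {}                       # slot -> frame data

    # Producer side
    def dequeue_buffer(self):
        """Take ownership of a free slot to draw into."""
        if not self.free:
            raise RuntimeError("no free buffer")
        return self.free.popleft()

    def queue_buffer(self, slot, frame):
        """Hand a filled slot over to the consumer side."""
        self.contents[slot] = frame
        self.queued.append(slot)

    # Consumer side
    def acquire_buffer(self):
        """Take the oldest filled slot, or None if nothing is queued."""
        if not self.queued:
            return None
        slot = self.queued.popleft()
        return slot, self.contents[slot]

    def release_buffer(self, slot):
        """Return a consumed slot to the free pool."""
        del self.contents[slot]
        self.free.append(slot)


bq = ToyBufferQueue(buffer_count=2)
slot = bq.dequeue_buffer()          # producer obtains a buffer
bq.queue_buffer(slot, "frame 0")    # producer submits the rendered frame
acquired, frame = bq.acquire_buffer()  # consumer (for example, a compositor) takes it
bq.release_buffer(acquired)         # consumer returns the buffer for reuse
```

Each slot is owned by exactly one side at a time, which is why the pool and the queue are kept as separate structures in this sketch.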

BufferQueue is a data structure that combines a buffer pool with a queue and uses Binder inter-process communication (IPC) to pass buffers between processes. The producer interface, or what you pass to somebody who wants to generate graphic buffers, is IGraphicBufferProducer (part of SurfaceTexture). BufferQueue is often used to render to a Surface and consume with a GL Consumer, among other tasks.

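BufferQueue can operate in three different modes:

Synchronous-like mode: By default, every buffer that comes in from the producer goes out at the consumer. No buffer is ever discarded, and a producer that creates buffers faster than they are drained blocks and waits for free buffers.

Non-blocking mode: BufferQueue generates an error rather than waiting for a free buffer. No buffer is discarded in this mode either.

Discard mode: BufferQueue discards old buffers rather than generating errors or waiting.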
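The difference between the three modes shows up only when the producer outruns the consumer. The sketch below is a hypothetical model of that moment, not the BufferQueue implementation: `ModalQueue`, `Mode`, `WouldBlock`, and `NoFreeBuffer` are invented names, and the synchronous-like case raises an exception at the point where a real producer thread would wait.

```python
from collections import deque
from enum import Enum


class Mode(Enum):
    SYNCHRONOUS_LIKE = 1  # producer waits for a free buffer
    NON_BLOCKING = 2      # producer gets an error instead of waiting
    DISCARD = 3           # oldest queued frame is dropped to make room


class WouldBlock(Exception):
    """Marks the point where a real producer thread would wait."""


class NoFreeBuffer(Exception):
    """The error a non-blocking producer receives when the queue is full."""


class ModalQueue:
    def __init__(self, capacity, mode):
        self.capacity = capacity
        self.mode = mode
        self.queued = deque()
        self.dropped = []

    def produce(self, frame):
        if len(self.queued) == self.capacity:
            if self.mode is Mode.SYNCHRONOUS_LIKE:
                raise WouldBlock("producer must wait for the consumer")
            if self.mode is Mode.NON_BLOCKING:
                raise NoFreeBuffer("queue is full")
            # Discard mode: drop the oldest frame so the newest one fits.
            self.dropped.append(self.queued.popleft())
        self.queued.append(frame)

    def consume(self):
        """Return the oldest queued frame, or None if the queue is empty."""
        return self.queued.popleft() if self.queued else None


def overrun(mode):
    """Queue three frames into a two-slot queue and report what happened."""
    queue = ModalQueue(capacity=2, mode=mode)
    try:
        for frame in ("f0", "f1", "f2"):
            queue.produce(frame)
    except (WouldBlock, NoFreeBuffer) as error:
        return type(error).__name__, list(queue.queued), queue.dropped
    return "ok", list(queue.queued), queue.dropped


for mode in Mode:
    print(mode.name, overrun(mode))
```

In this model only discard mode ever loses a frame; the other two keep every queued frame and differ in whether the producer waits or receives an error.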

To conduct most of the composition work, SurfaceFlinger acts as just another OpenGL ES client. So when SurfaceFlinger is actively compositing one buffer or two into a third, for instance, it's using OpenGL ES.

The Hardware Composer HAL conducts the other half of the work. This HAL acts as the central point for all Android graphics rendering.