Android runtime (ART) includes a just-in-time (JIT) compiler with code profiling that continually improves the performance of Android applications as they run. The JIT compiler complements ART's current ahead-of-time (AOT) compiler and improves runtime performance, saves storage space, and speeds application and system updates. It also improves upon the AOT compiler by avoiding system slowdown during automatic application updates or recompilation of applications during over-the-air (OTA) updates.

Although JIT and AOT use the same compiler with a similar set of optimizations, the generated code might not be identical. JIT makes use of runtime type information, can do better inlining, and makes on-stack replacement (OSR) compilation possible, all of which generate slightly different code.

JIT architecture

JIT compilation

JIT compilation involves the following activities:

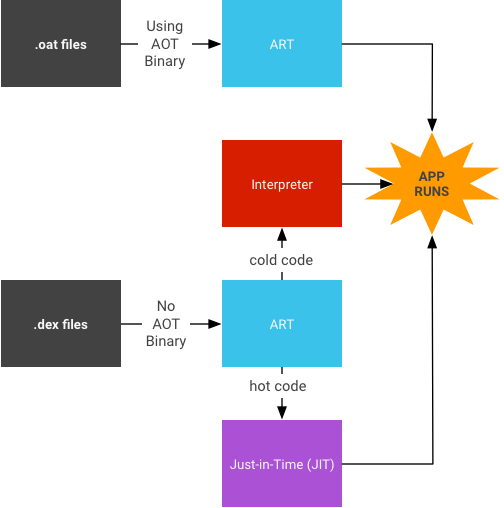

- The user runs the app, which then triggers ART to load the

.dexfile.- If the

.oatfile (the AOT binary for the.dexfile) is available, ART uses it directly. Although.oatfiles are generated regularly, they don't always contain compiled code (AOT binary). - If the

.oatfile does not contain compiled code, ART runs through JIT and the interpreter to execute the.dexfile.

- If the

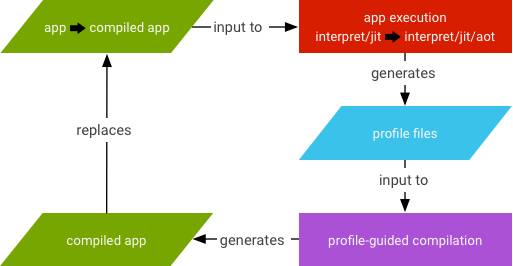

- JIT is enabled for any application that is not compiled according to the

speedcompilation filter (which says "compile as much as you can from the app"). - The JIT profile data is dumped to a file in a system directory that only the application can access.

- The AOT compilation (

dex2oat) daemon parses that file to drive its compilation.
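
To see which of these paths applies to a particular app, you can ask the package manager for its dexopt state. The sketch below is a hypothetical helper (`dexopt_state` is not an Android tool); it assumes that `adb shell dumpsys package dexopt` prints a `[package.name]` header for each app followed by indented details such as a `status=` compiler filter, a layout that may differ between Android releases.

```shell
#!/bin/sh
# Sketch: print only one app's block from the dexopt state dump.
# dexopt_state is a hypothetical helper name. Assumption: the dump lists
# each app under a "[package.name]" header line, followed by indented
# detail lines, until the next header.

dexopt_state() {
    pkg="$1"
    if [ -z "$pkg" ]; then
        echo "usage: dexopt_state <package>" >&2
        return 2
    fi
    adb shell dumpsys package dexopt | awk -v want="[$pkg]" '
        # Compare lines with their leading indentation removed.
        { s = $0; sub(/^[ \t]+/, "", s) }
        # A header line starts a new app block; print only the wanted one.
        s ~ /^\[.*\]$/ { inside = (s == want) }
        inside { print }
    '
}
```

For example, `dexopt_state com.example.app` (a placeholder package name) prints just that app's entry. A status of `speed` or `speed-profile` suggests AOT code is available, while a filter such as `verify` suggests the app runs mostly through the interpreter and JIT.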

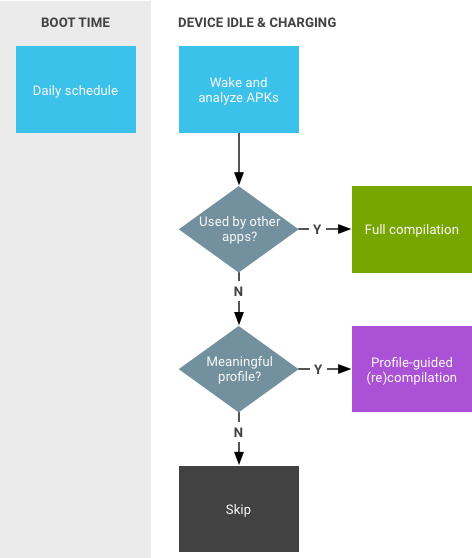

Figure 3. JIT daemon activities.

Google Play services is an example of an application that other applications use, so it behaves similarly to a shared library.

JIT workflow

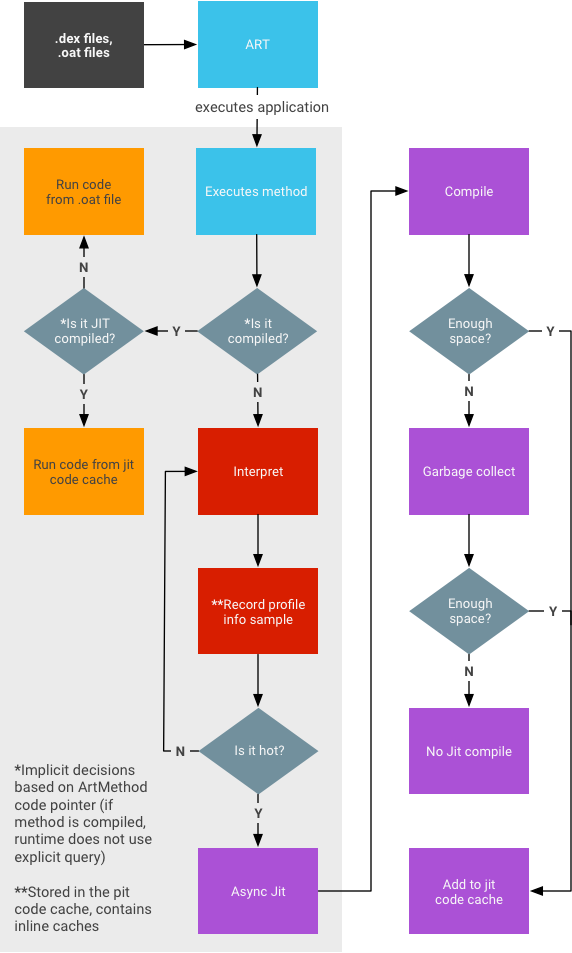

- Profiling information is stored in the code cache and subjected to garbage collection under memory pressure.

- There is no guarantee that a snapshot taken while the application was in the background contains complete data (i.e., everything that was JITed).

- There is no attempt to ensure everything is recorded (as this can impact runtime performance).

- Methods can be in three different states:
  - interpreted (dex code)
  - JIT compiled
  - AOT compiled

- The memory requirement to run JIT without impacting foreground app performance depends upon the app in question. Large apps require more memory than small apps. In general, large apps stabilize around 4 MB.

Turn on JIT logging

To turn on JIT logging, run the following commands:

    adb root
    adb shell stop
    adb shell setprop dalvik.vm.extra-opts -verbose:jit
    adb shell start
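
The same steps can be wrapped in a pair of shell functions so that logging is easy to switch off again. This is a sketch: `jit_logging_on` and `jit_logging_off` are hypothetical names, the `adb wait-for-device` call is an addition that gives `adbd` time to restart as root, and clearing the option by setting `dalvik.vm.extra-opts` to an empty string is an assumption rather than a documented step.

```shell
#!/bin/sh
# Sketch: toggle JIT logging. jit_logging_on/jit_logging_off are
# hypothetical helper names; each step runs only if the previous one
# succeeded.

jit_logging_on() {
    adb root &&
        adb wait-for-device &&
        adb shell stop &&
        adb shell setprop dalvik.vm.extra-opts -verbose:jit &&
        adb shell start
}

# Assumption: an empty value removes the extra runtime option again.
# The whole device command is quoted so that '' survives the trip
# through adb to the device shell.
jit_logging_off() {
    adb root &&
        adb wait-for-device &&
        adb shell stop &&
        adb shell "setprop dalvik.vm.extra-opts ''" &&
        adb shell start
}
```

Because `setprop` replaces the whole property value, enabling logging this way overwrites any other options already stored in `dalvik.vm.extra-opts`.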

Disable JIT

To disable JIT, run the following commands:

    adb root
    adb shell stop
    adb shell setprop dalvik.vm.usejit false
    adb shell start
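
A small helper can apply either setting and read the property back to confirm it. In this sketch `set_jit` is a hypothetical name, `adb wait-for-device` is an added step while `adbd` restarts as root, and passing `true` to turn JIT back on is an assumption; the commands above only show `false`.

```shell
#!/bin/sh
# Sketch: disable (false) or re-enable (true, assumed) JIT, then print
# the property value the device reports. set_jit is a hypothetical name.

set_jit() {
    case "$1" in
        true|false) ;;
        *) echo "usage: set_jit true|false" >&2
           return 2 ;;
    esac
    adb root &&
        adb wait-for-device &&
        adb shell stop &&
        adb shell setprop dalvik.vm.usejit "$1" &&
        adb shell start &&
        adb shell getprop dalvik.vm.usejit
}
```

For example, `set_jit false` disables JIT and should end by printing `false`.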

Force compilation

To force compilation, run the following:

adb shell cmd package compile

Common use cases for force compiling a specific package:

- Profile-based:

adb shell cmd package compile -m speed-profile -f my-package

- Full:

adb shell cmd package compile -m speed -f my-package

Common use cases for force compiling all packages:

- Profile-based:

adb shell cmd package compile -m speed-profile -f -a

- Full:

adb shell cmd package compile -m speed -f -a
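
The four invocations above differ only in the compiler filter and in whether a package name or `-a` is passed, so they fit in one function. `force_compile` is a hypothetical helper, and `my-package` remains a placeholder for a real package name.

```shell
#!/bin/sh
# Sketch: force-compile one package, or all packages, with one of the two
# filters shown above. force_compile is a hypothetical helper name.
#   force_compile speed-profile my-package   # profile-based, one package
#   force_compile speed                      # full, all packages (-a)

force_compile() {
    filter="$1"
    target="$2"
    case "$filter" in
        speed-profile|speed) ;;
        *) echo "usage: force_compile speed-profile|speed [package]" >&2
           return 2 ;;
    esac
    if [ -n "$target" ]; then
        adb shell cmd package compile -m "$filter" -f "$target"
    else
        # No package given: compile every package, as in the -a examples.
        adb shell cmd package compile -m "$filter" -f -a
    fi
}
```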

Clear profile data

On Android 13 or earlier

To clear local profile data and remove compiled code, run the following:

adb shell pm compile --reset

On Android 14 or later

To clear local profile data only:

adb shell pm art clear-app-profiles

Note: Unlike the command for Android 13 or earlier, this command doesn't clear external profile data (`.dm`) that is installed with the app.

To clear local profile data and remove compiled code generated from local profile data (i.e., to reset to the install state), run the following:

adb shell pm compile --reset

Note: This command doesn't remove compiled code generated from external profile data (`.dm`) that is installed with the app.

To clear all compiled code, run this command:

adb shell cmd package compile -m verify -f

Note: This command retains local profile data.
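
Because the command for clearing local profile data depends on the Android version, a script that runs against mixed devices can choose it from the API level. This sketch assumes that `ro.build.version.sdk` reports the API level (34 for Android 14) and that both `pm compile --reset` and `pm art clear-app-profiles` accept a package name; `clear_local_profiles` is a hypothetical helper and `my-package` is a placeholder.

```shell
#!/bin/sh
# Sketch: clear an app's local profile data with the command that matches
# the device's Android version. clear_local_profiles is a hypothetical name.

clear_local_profiles() {
    pkg="$1"
    if [ -z "$pkg" ]; then
        echo "usage: clear_local_profiles <package>" >&2
        return 2
    fi
    # Strip the carriage return that some adb versions add to shell output.
    sdk="$(adb shell getprop ro.build.version.sdk | tr -d '\r')"
    case "$sdk" in
        ''|*[!0-9]*) echo "could not read API level: '$sdk'" >&2
                     return 1 ;;
    esac
    if [ "$sdk" -ge 34 ]; then
        # Android 14 or later: clears local profile data only; installed
        # .dm profile data is not touched.
        adb shell pm art clear-app-profiles "$pkg"
    else
        # Android 13 or earlier: clears local profile data and also
        # removes compiled code.
        adb shell pm compile --reset "$pkg"
    fi
}
```

Note that the two branches are not equivalent: on Android 13 or earlier the only option shown here also removes compiled code.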