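To support a scalable, performant, and flexible continuous integration dashboard, the VTS Dashboard backend must be carefully designed with a thorough understanding of the database's functionality. Google Cloud Datastore is a NoSQL database that offers transactional ACID guarantees and eventual consistency, as well as strong consistency within entity groups. However, its structure is very different from SQL databases (and even from Cloud Bigtable): instead of tables, rows, and cells, it is organized into kinds, entities, and properties.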

The following sections outline the data structure and query patterns for an effective backend for the VTS Dashboard web service.

Entities

The following entities store summaries and resources from VTS test runs:

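- Test entity. Stores metadata about the runs of a particular test. Its key is the test name, and its properties include the failure count, the passing count, and the list of test case breakages as of the last alerting job update.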

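- Test run entity. Contains metadata from a particular test run. It must store the test start and end timestamps, the test build ID, the numbers of passing and failing test cases, the type of run (for example, presubmit, postsubmit, or local), a list of log links, the host name, and coverage summary counts.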

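- Device information entity. Contains details about the devices used during a test run, including the device build ID, product name, build target, branch, and ABI information. It is stored separately from the test run entity so that multi-device test runs are supported in a one-to-many relationship.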

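- Profiling point run entity. Summarizes the data gathered for a particular profiling point during a test run. It describes the axis labels, profiling point name, values, type, and regression mode of the profiling data.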

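- Coverage entity. Describes the coverage data gathered for a single file. It contains the Git project information, the file path, and the list of per-line coverage counts for the source file.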

- Test case run entity. Describes the outcome of a particular test case during a test run, including the test case name and its result.

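- User favorites entity. Each user subscription is represented by an entity containing a reference to the test and the user ID generated by the App Engine Users service. This allows efficient querying in both directions (that is, for all users subscribed to a test and for all tests favorited by a user).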
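To make the properties concrete, the entity kinds above can be sketched as plain Python dataclasses. This is a minimal illustration, not the dashboard's actual schema: every class and field name below is hypothetical, chosen only to mirror the descriptions in the list.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TestEntity:
    test_name: str  # also used as the entity key
    passing_count: int = 0
    failing_count: int = 0
    # Test case breakages, refreshed by the alerting job.
    failing_test_cases: List[str] = field(default_factory=list)


@dataclass
class TestRunEntity:
    start_timestamp: int
    end_timestamp: int
    test_build_id: str
    passing_count: int
    failing_count: int
    run_type: str  # e.g. "presubmit", "postsubmit", or "local"
    log_links: List[str] = field(default_factory=list)
    hostname: str = ""
    covered_line_count: int = 0  # coverage summary counts
    total_line_count: int = 0


@dataclass
class DeviceInfoEntity:  # one-to-many: several may exist per test run
    device_build_id: str
    product: str
    build_target: str
    branch: str
    abis: List[str] = field(default_factory=list)


@dataclass
class ProfilingPointRunEntity:
    name: str
    x_label: str
    y_label: str
    values: List[float]
    profiling_type: str
    regression_mode: str


@dataclass
class CoverageEntity:
    git_project: str
    file_path: str
    # Per-line coverage counts for the source file.
    line_coverage: List[int] = field(default_factory=list)


@dataclass
class TestCaseRunEntity:
    test_case_name: str
    result: str


@dataclass
class UserFavoriteEntity:
    user_id: str    # from the App Engine Users service
    test_name: str  # reference to the favorited test
```

Keeping device information in its own kind, rather than as fields on `TestRunEntity`, is what allows any number of devices per run.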

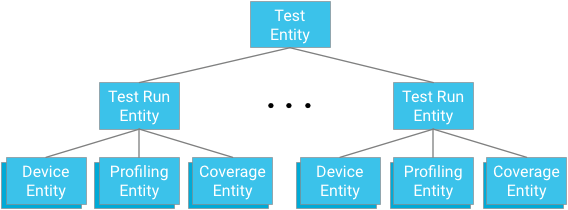

Entity grouping

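Each test module is the root of an entity group. Test run entities are children in this group, and each test run is in turn the parent of the device, profiling point, and coverage entities associated with that test and test run.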
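The ancestry can be pictured as key paths. Cloud Datastore keys encode ancestry as a path of (kind, identifier) pairs; the sketch below models that with plain tuples, assuming hypothetical kind names (`Test`, `TestRun`, `Coverage`) and using the test start timestamp as the test run ID. The helper functions are illustrative only and are not part of any Datastore client library.

```python
from typing import Tuple, Union

# A key path: (kind, identifier) pairs from the root entity down.
KeyPath = Tuple[Tuple[str, Union[str, int]], ...]


def make_test_key(test_name: str) -> KeyPath:
    # Root of the entity group: one per test module, keyed by test name.
    return (("Test", test_name),)


def make_test_run_key(test_name: str, start_timestamp: int) -> KeyPath:
    # A test run is a child of its test, identified by its start timestamp.
    return make_test_key(test_name) + (("TestRun", start_timestamp),)


def make_child_key(parent: KeyPath, kind: str, ident: Union[str, int]) -> KeyPath:
    # Device info, profiling point, and coverage entities sit under a test run.
    return parent + ((kind, ident),)


def entity_group_root(key: KeyPath) -> KeyPath:
    # Entities that share a root key belong to the same entity group.
    return key[:1]


def is_ancestor(ancestor: KeyPath, key: KeyPath) -> bool:
    # True if `ancestor` is a strict prefix of `key`'s path.
    return len(ancestor) < len(key) and key[: len(ancestor)] == ancestor
```

For example, a coverage entity built with `make_child_key(make_test_run_key("VtsKernelLtp", 1500000000), "Coverage", "fs/open.c")` has `("Test", "VtsKernelLtp")` as its group root, so it shares an entity group with every other run of that test module.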

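Key point: When designing ancestry relationships, you must balance providing effective, consistent query mechanisms against the limitations the database enforces.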

Benefits

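The consistency requirement ensures that future operations do not see the effects of a transaction until it commits, and that past transactions are visible to present operations. In Cloud Datastore, entity grouping creates islands of strongly consistent reads and writes within the group; in this case, each island covers all of the test runs and data related to one test module. This offers the following benefits: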

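- Reads and updates of test module state by the alerting job can be treated as atomic

- A consistent view of test case results within a test module is guaranteed

- Queries within an ancestor tree are faster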
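Treating the alerting job's read-modify-write as atomic can be illustrated with an in-memory stand-in for one entity group. The sketch uses optimistic concurrency control (a version check at commit time) as the mechanism; that choice is an assumption made for illustration, and `EntityGroup`, `ConflictError`, and `run_in_transaction` are hypothetical names rather than Cloud Datastore APIs.

```python
import threading
from typing import Callable, Dict, Tuple


class ConflictError(Exception):
    """Raised when the entity group changed between read and commit."""


class EntityGroup:
    # Hypothetical in-memory stand-in for one test module's entity group.
    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._version = 0
        self._state: Dict[str, int] = {"passing_count": 0, "failing_count": 0}

    def read(self) -> Tuple[int, Dict[str, int]]:
        # Return a snapshot of the state together with its version.
        with self._lock:
            return self._version, dict(self._state)

    def commit(self, read_version: int, new_state: Dict[str, int]) -> None:
        # Apply the write only if no one else committed since our read.
        with self._lock:
            if self._version != read_version:
                raise ConflictError("entity group modified since read")
            self._state = dict(new_state)
            self._version += 1


def run_in_transaction(group: EntityGroup,
                       update: Callable[[Dict[str, int]], Dict[str, int]],
                       retries: int = 3) -> Dict[str, int]:
    # Read a snapshot, compute the new state, and commit; retry on conflict.
    for _ in range(retries):
        version, state = group.read()
        new_state = update(state)
        try:
            group.commit(version, new_state)
            return new_state
        except ConflictError:
            continue
    raise ConflictError("too many conflicting transactions")
```

A commit based on a stale read is rejected rather than silently overwriting a newer update, which is what lets the alerting job treat the module state as a single atomic unit.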

Limitations

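Writing to an entity group faster than one entity per second is not recommended, because some writes may be rejected. As long as the alerting jobs and uploads stay at or below one write per second, the structure is solid and guarantees strong consistency.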

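Ultimately, a cap of one write per second per test module is reasonable: a test run usually takes at least one minute, including the overhead of the VTS framework, so unless a test is consistently executing simultaneously on more than 60 different hosts, writes cannot become a bottleneck. This is even less likely because each module is part of a test plan that often takes more than an hour to complete. If hosts do run a test at the same time and cause short bursts of writes to the same entity group, the anomalies are easy to handle (for example, by catching write errors and retrying).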
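The capacity argument and the suggested error handling can be sketched together. `peak_writes_per_second` reproduces the arithmetic above (60 hosts, each finishing one run every 60 seconds, produce one write per second), and `write_with_retry` shows one common way to absorb a short burst: retrying with exponential backoff and jitter. `TransientWriteError` is a hypothetical placeholder for whatever exception the real client raises on a rejected write, and the delay values are assumptions.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def peak_writes_per_second(concurrent_hosts: int, min_run_seconds: float) -> float:
    # Each host uploads at most one test run per min_run_seconds, so this is an
    # upper bound on the write rate to one test module's entity group.
    return concurrent_hosts / min_run_seconds


class TransientWriteError(Exception):
    """Hypothetical placeholder for a write rejected due to contention."""


def write_with_retry(write: Callable[[], T], max_attempts: int = 5,
                     base_delay: float = 1.0,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    # Retry rejected writes with exponential backoff plus random jitter so that
    # a short burst of concurrent uploads spreads out over several seconds.
    for attempt in range(max_attempts):
        try:
            return write()
        except TransientWriteError:
            if attempt == max_attempts - 1:
                raise  # give up and surface the last error
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    raise ValueError("max_attempts must be at least 1")
```

The jitter keeps hosts that collided once from retrying in lockstep and colliding again.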

Scaling considerations

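A test run does not have to use its test as a parent (for example, it could use some other key and store the test name and test start time as properties); however, doing so trades strong consistency for eventual consistency. For example, the alerting job might not see a mutually consistent snapshot of the most recent test runs in a test module, so the global state might not accurately reflect the sequence of test runs. It can also affect how test runs are displayed within a single test module, because the display might not be a consistent snapshot of the run sequence. The snapshot eventually becomes consistent, but there is no guarantee that it reflects the freshest data.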
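The difference between the two designs can be illustrated with a toy model. It does not describe how Cloud Datastore is implemented; it only assumes, for illustration, that writes land immediately while the index used by non-ancestor queries catches up later. `SimulatedStore` and its methods are hypothetical.

```python
from typing import Dict, List, Tuple


class SimulatedStore:
    """Toy model: entities are written at once, but the global index used by
    non-ancestor queries is updated only when apply_pending_index_updates()
    runs, mimicking replication lag."""

    def __init__(self) -> None:
        self.entities: Dict[Tuple[str, int], dict] = {}
        self._global_index: List[Tuple[str, int]] = []
        self._pending: List[Tuple[str, int]] = []

    def put_test_run(self, test_name: str, start_ts: int, props: dict) -> None:
        key = (test_name, start_ts)
        self.entities[key] = props
        self._pending.append(key)  # index update not yet visible

    def apply_pending_index_updates(self) -> None:
        self._global_index.extend(self._pending)
        self._pending.clear()

    def global_query(self, test_name: str) -> List[int]:
        # Property-filter query over the lagging index: eventually consistent.
        return sorted(ts for name, ts in self._global_index if name == test_name)

    def ancestor_query(self, test_name: str) -> List[int]:
        # Ancestor query within the entity group: sees every committed write.
        return sorted(ts for name, ts in self.entities if name == test_name)
```

Until the pending index updates are applied, the global query misses the newest run. That is the kind of inconsistent snapshot an alerting job could observe if test runs were not children of their test.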

Test cases

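Another potential bottleneck is a large test with many test cases. Two operating constraints apply: write throughput within an entity group is capped at one write per second, and a transaction can contain at most 500 entities.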

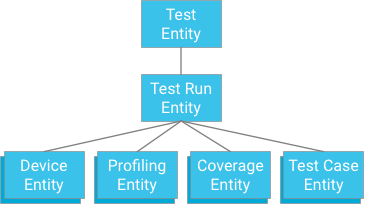

一種方法是指定測試案例,讓測試執行作業成為祖系 (類似儲存涵蓋率資料、剖析資料和裝置資訊的方式):

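While this approach offers atomicity and consistency, it imposes strong limitations on tests: if a transaction is limited to 500 entities, a test can have no more than 498 test cases (assuming no coverage or profiling data). If a test exceeded this, a single transaction could not write all of the test case results at once, and dividing the test cases into separate transactions could exceed the entity group's maximum write throughput of one write per second. Because this solution does not scale well without sacrificing performance, it is not recommended.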
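The arithmetic behind these limits can be made explicit. The sketch assumes that two non-test-case entities share the transaction, which is one way to reproduce the 498 figure above (for example, the test run and one device information entity); that breakdown is an assumption, as are the helper names.

```python
import math

# Transaction size limit cited in this document.
MAX_ENTITIES_PER_TRANSACTION = 500


def max_child_test_cases(other_entities: int = 2) -> int:
    # other_entities: non-test-case entities written in the same transaction.
    # The default of 2 is an assumption that reproduces the 498 figure.
    return MAX_ENTITIES_PER_TRANSACTION - other_entities


def transactions_needed(num_test_cases: int, other_entities: int = 2) -> int:
    # Number of transactions required if the entities are split into chunks.
    total = num_test_cases + other_entities
    return math.ceil(total / MAX_ENTITIES_PER_TRANSACTION)


def write_budget_seconds(num_test_cases: int, other_entities: int = 2,
                         group_writes_per_second: float = 1.0) -> float:
    # Every transaction is a write to the same entity group, so each one uses
    # up a second of the group's one-write-per-second budget.
    return transactions_needed(num_test_cases, other_entities) / group_writes_per_second
```

For example, a test with 2,000 test cases would need ceil(2,002 / 500) = 5 transactions, consuming five seconds of the entity group's write budget for a single upload.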

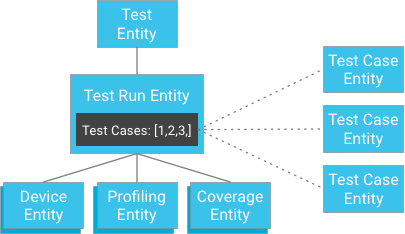

不過,您可以將測試案例個別儲存,並將其鍵提供給測試執行作業,而非將測試案例結果儲存為測試執行作業的子項 (測試執行作業包含測試案例實體的 ID 清單):

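At first glance, this might appear to break the strong consistency guarantee. However, if the client has the test run entity and its list of test case IDs, it does not need to construct a query: it can get the test cases directly by ID, and such lookups are always guaranteed to be consistent. This approach greatly relaxes the limit on how many test cases a test run can have, while still achieving strong consistency without adding writes to the entity group.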
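The key-list design can be sketched with an in-memory store. `InMemoryStore`, `put_test_cases`, `get_multi`, `upload_run`, and the record classes are hypothetical stand-ins; the only Datastore property the sketch relies on is the one stated above, that fetching entities directly by key (rather than through a query) is strongly consistent.

```python
import itertools
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class TestCaseRecord:
    name: str
    result: str  # e.g. "PASS" or "FAIL" (hypothetical values)


@dataclass
class TestRunRecord:
    start_timestamp: int
    # IDs of independently stored test case entities.
    test_case_ids: List[int] = field(default_factory=list)


class InMemoryStore:
    """Hypothetical store: put assigns IDs, get_multi looks entities up by ID."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._test_cases: Dict[int, TestCaseRecord] = {}

    def put_test_cases(self, cases: List[TestCaseRecord]) -> List[int]:
        ids = []
        for case in cases:
            case_id = next(self._ids)
            self._test_cases[case_id] = case
            ids.append(case_id)
        return ids

    def get_multi(self, ids: List[int]) -> List[Optional[TestCaseRecord]]:
        # Direct lookups, no query; results are in the requested order.
        return [self._test_cases.get(i) for i in ids]


def upload_run(store: InMemoryStore, start_ts: int,
               cases: List[TestCaseRecord]) -> TestRunRecord:
    # Test cases are stored outside the test module's entity group; only the
    # returned test run record would be written into that group.
    ids = store.put_test_cases(cases)
    return TestRunRecord(start_timestamp=start_ts, test_case_ids=ids)
```

Because the test cases live outside the test module's entity group, even a run with 1,200 test cases adds only one write (the test run) to that group.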

Data access patterns

The VTS Dashboard uses the following data access patterns:

- User favorites. Query user favorites entities with an equality filter on the property that holds a particular App Engine User object.

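- Test listing. A simple query on test entities. To reduce the bandwidth needed to render the home page, you can use a projection on the passing and failing counts, which omits the potentially long list of failed test case IDs and other metadata used by the alerting jobs.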

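- Test runs. Querying test run entities requires sorting on the key (timestamp) and possibly filtering on test run properties such as build ID or pass count. Performing an ancestor query with the test entity key makes the read strongly consistent. From there, all test case results can be retrieved using the list of IDs stored in a test run property; because of the nature of Datastore get operations, this result is also guaranteed to be strongly consistent.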

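- Profiling and coverage data. Profiling or coverage data associated with a test can be queried without retrieving any other test run data (such as other profiling or coverage data, or test case data). An ancestor query using the test and test run entity keys retrieves all profiling points recorded during the test run; also filtering on the profiling point name or file name retrieves a single profiling or coverage entity. Because it is an ancestor query, this operation is strongly consistent.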
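The four access patterns can be summarized as filters over in-memory lists. This sketch shows only the query shapes, assuming hypothetical entity classes and helper names; in Cloud Datastore these would be equality-filtered queries, a projection query, and ancestor queries respectively, and the list comprehensions below merely model their results. Sorting newest-first is also an assumption.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class TestSummary:
    name: str
    passing_count: int
    failing_count: int
    failing_test_case_ids: List[int] = field(default_factory=list)


@dataclass
class Run:
    test_name: str        # parent (ancestor) key
    start_timestamp: int  # key ID, used for sorting
    build_id: str
    passing_count: int


@dataclass
class Favorite:
    user_id: str
    test_name: str


@dataclass
class ProfilingPoint:
    test_name: str  # ancestor: test
    run_ts: int     # ancestor: test run
    name: str
    values: List[float]


def favorites_of_user(favs: List[Favorite], user_id: str) -> List[str]:
    # Equality filter on the user property.
    return [f.test_name for f in favs if f.user_id == user_id]


def subscribers_of_test(favs: List[Favorite], test_name: str) -> List[str]:
    # Equality filter on the test property (the other direction).
    return [f.user_id for f in favs if f.test_name == test_name]


def list_tests(tests: List[TestSummary]) -> List[Tuple[str, int, int]]:
    # Projection: keep only the fields the home page renders, dropping the
    # potentially long failing_test_case_ids list.
    return [(t.name, t.passing_count, t.failing_count) for t in tests]


def query_test_runs(runs: List[Run], test_name: str,
                    build_id: Optional[str] = None) -> List[Run]:
    # Ancestor query (same parent test) with an optional property filter,
    # sorted by key (start timestamp), newest first.
    matches = [r for r in runs if r.test_name == test_name
               and (build_id is None or r.build_id == build_id)]
    return sorted(matches, key=lambda r: r.start_timestamp, reverse=True)


def query_profiling_points(points: List[ProfilingPoint], test_name: str,
                           run_ts: int,
                           name: Optional[str] = None) -> List[ProfilingPoint]:
    # Ancestor query on (test, test run); a name filter narrows it to one entity.
    return [p for p in points
            if (p.test_name, p.run_ts) == (test_name, run_ts)
            and (name is None or p.name == name)]
```

After `query_test_runs` returns a run, its test case results would be fetched by ID rather than by a further query, as described in the test runs pattern.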

For more details on the UI, including screenshots of these data patterns in action, see "VTS Dashboard UI".